Large Language Models (LLMs) have demonstrated exceptional performance across diverse domains, but their high inference latency is an increasingly significant constraint. Early Exit has emerged as a promising way to accelerate inference by dynamically bypassing redundant layers within the model.

However, in decoder-only architectures, the efficiency of Early Exit is severely bottlenecked by the 'KV Cache Absence' problem. When a token exits early, the layers it skips never produce key/value (KV) cache entries for that position, so subsequent tokens that attend to it in those layers find no historical states to read. Existing solutions, such as recomputation or masking, either introduce significant latency overhead or incur severe precision loss, and thus fail to bridge the gap between theoretical layer reduction and practical wall-clock speedup.
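To make the gap concrete, here is a toy bookkeeping sketch. It is not taken from River-LLM; the function name `missing_kv_entries` and the `exit_layers` representation are hypothetical, chosen only for illustration. It records how many layers each token executed and reports, per layer, which positions never wrote a KV entry.

```python
from typing import Dict, List


def missing_kv_entries(exit_layers: List[int], num_layers: int) -> Dict[int, List[int]]:
    """Return, for each layer index, the token positions with no KV entry.

    exit_layers[t] is the number of layers token t actually executed
    (hypothetical representation; 1 <= exit_layers[t] <= num_layers).
    """
    missing: Dict[int, List[int]] = {}
    for layer in range(num_layers):
        # Token t wrote a KV entry at `layer` only if it ran more than `layer` layers.
        gaps = [t for t, depth in enumerate(exit_layers) if depth <= layer]
        if gaps:
            missing[layer] = gaps
    return missing


# Four tokens in a 4-layer decoder: token 1 exits after 2 layers, token 3 after 1.
gaps = missing_kv_entries([4, 2, 4, 1], num_layers=4)
print(gaps)  # {1: [3], 2: [1, 3], 3: [1, 3]}
```

In this example token 2 runs all four layers, but at layers 2 and 3 it has no cached keys or values for token 1. That is the hole that recomputation or masking has to paper over.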

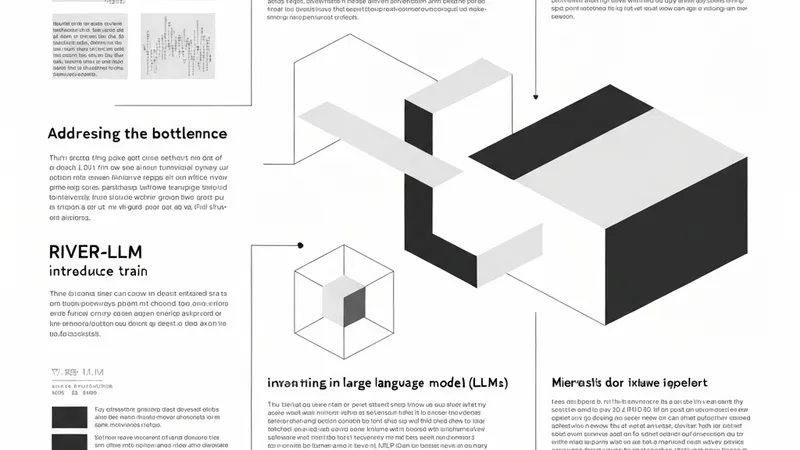

To address this challenge, researchers have proposed River-LLM, a training-free framework designed to enable seamless token-level Early Exit. River-LLM introduces a lightweight 'KV-Shared Exit River' mechanism, which lets the backbone's missing KV cache be generated and preserved as part of the exit process itself, eliminating the need for costly recovery operations.

Furthermore, River-LLM leverages state transition similarity within decoder blocks to predict cumulative KV errors, and uses that prediction to guide precise exit decisions. Experiments on mathematical reasoning and code generation tasks show that River-LLM achieves a practical wall-clock speedup of 1.71x to 2.16x while consistently maintaining high generation quality.
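The idea of a similarity-guided exit decision can be sketched roughly as follows. This is an illustrative proxy under stated assumptions, not River-LLM's actual predictor: it assumes the drift of a block is measured as one minus the cosine similarity between its input and output hidden states, and that the remaining blocks would each drift by about the same amount. The names `should_exit` and `error_budget` are hypothetical.

```python
import math
from typing import Sequence


def cosine_similarity(a: Sequence[float], b: Sequence[float]) -> float:
    """Cosine similarity of two equal-length vectors (0.0 if either is all zeros)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0.0 or norm_b == 0.0:
        return 0.0
    return dot / (norm_a * norm_b)


def should_exit(
    h_in: Sequence[float],
    h_out: Sequence[float],
    layers_remaining: int,
    error_budget: float = 0.05,
) -> bool:
    """Decide whether to exit after the current block.

    Assumption (not from the paper): the error accumulated by skipping the
    remaining blocks is approximated as this block's drift times the number
    of blocks left.
    """
    drift = 1.0 - cosine_similarity(h_in, h_out)
    predicted_cumulative_error = drift * layers_remaining
    return predicted_cumulative_error <= error_budget


# A block that barely changes the hidden state suggests the rest can be skipped.
print(should_exit([1.0, 0.0], [1.0, 0.0], layers_remaining=10))
# A block that rotates the state strongly suggests continuing.
print(should_exit([1.0, 0.0], [0.0, 1.0], layers_remaining=10))
```

A linear extrapolation is the simplest possible choice here. A real predictor would more likely be calibrated against measured KV errors, but the control flow (measure similarity, estimate accumulated error, compare against a budget) stays the same.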