Hybrid-thinking language models, which expose explicit "think" and "no-think" modes, often suffer from "reasoning leakage": even in "no-think" mode they produce lengthy, self-reflective responses, because current designs do not cleanly separate the two modes. Existing remedies such as data curation and multi-stage training are limited, because both modes still share the same feed-forward parameters.

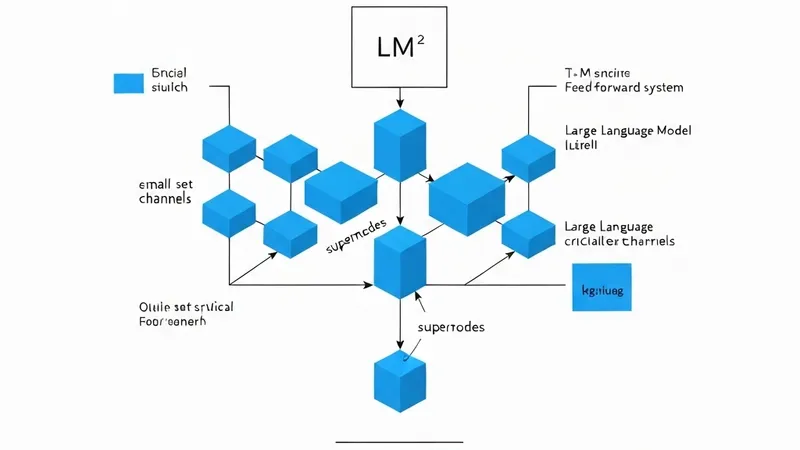

Path-Lock Expert (PLE) offers an architectural solution. It replaces the single MLP in each decoder layer with two semantically locked experts, one for "think" and one for "no-think", while attention, embeddings, normalization, and the language-model head remain shared. A deterministic control-token router selects a single expert path for the entire sequence, so each token still passes through exactly one MLP and the computation matches that of a dense model. During supervised fine-tuning, each expert receives mode-pure updates, that is, gradients only from data of its own mode.
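The routing and update rules above can be sketched in plain Python. This is a toy under stated assumptions, not the PLE implementation: `TinyMLP`, `PathLockMLP`, `route`, the control-token strings `<think>`/`<no_think>`, and the SGD step that trains only the output matrix are all hypothetical stand-ins, and the shared attention, embeddings, normalization, and LM head are omitted. The sketch shows two properties: one expert is chosen per sequence, so each token runs through exactly one MLP, and a training step in one mode leaves the other expert's weights untouched.

```python
import random
from typing import Dict, List

Vector = List[float]

# Hypothetical control-token strings; the tokens PLE actually uses may differ.
THINK, NO_THINK = "<think>", "<no_think>"


class TinyMLP:
    """Toy feed-forward block y = W2 @ relu(W1 @ x), standing in for a decoder MLP."""

    def __init__(self, d_model: int, d_hidden: int, rng: random.Random) -> None:
        self.w1 = [[rng.gauss(0.0, 0.5) for _ in range(d_model)] for _ in range(d_hidden)]
        self.w2 = [[rng.gauss(0.0, 0.5) for _ in range(d_hidden)] for _ in range(d_model)]

    def hidden(self, x: Vector) -> Vector:
        return [max(0.0, sum(w * xi for w, xi in zip(row, x))) for row in self.w1]

    def __call__(self, x: Vector) -> Vector:
        h = self.hidden(x)
        return [sum(w * hj for w, hj in zip(row, h)) for row in self.w2]


def route(tokens: List[str]) -> str:
    """Deterministic router: the first control token fixes the path for the whole sequence."""
    for tok in tokens:
        if tok == THINK:
            return "think"
        if tok == NO_THINK:
            return "no_think"
    raise ValueError("sequence has no mode control token")


class PathLockMLP:
    """One decoder layer's MLP replaced by two mode-locked experts (hypothetical toy)."""

    def __init__(self, d_model: int, d_hidden: int, seed: int = 0) -> None:
        rng = random.Random(seed)
        self.experts: Dict[str, TinyMLP] = {
            "think": TinyMLP(d_model, d_hidden, rng),
            "no_think": TinyMLP(d_model, d_hidden, rng),
        }

    def forward(self, states: List[Vector], mode: str) -> List[Vector]:
        # Only the selected expert runs, so per-token cost equals one dense MLP.
        expert = self.experts[mode]
        return [expert(x) for x in states]

    def sgd_step(self, states: List[Vector], targets: List[Vector], mode: str, lr: float) -> None:
        """Per-sample SGD on the selected expert's W2 under 0.5 * squared error."""
        expert = self.experts[mode]  # the other expert is never read or written
        for x, t in zip(states, targets):
            h = expert.hidden(x)
            y = [sum(w * hj for w, hj in zip(row, h)) for row in expert.w2]
            for i, (yi, ti) in enumerate(zip(y, t)):
                for j, hj in enumerate(h):
                    # dL/dW2[i][j] = (y_i - t_i) * h_j
                    expert.w2[i][j] -= lr * (yi - ti) * hj


if __name__ == "__main__":
    tokens = [NO_THINK, "What", "is", "2+2?"]
    mode = route(tokens)
    layer = PathLockMLP(d_model=4, d_hidden=8)
    states = [[0.1, -0.2, 0.3, 0.4] for _ in tokens]
    think_before = [row[:] for row in layer.experts["think"].w2]
    layer.sgd_step(states, [[0.0] * 4 for _ in tokens], mode, lr=0.1)
    print(mode, layer.experts["think"].w2 == think_before)  # no_think True
```

In an autograd framework the same mode purity would follow without any manual bookkeeping: the unselected expert never enters the computation graph, so it receives no gradient.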

PLE preserves strong "think"-mode performance while substantially improving "no-think" mode, which becomes more accurate, more concise, and less prone to leakage. On Qwen3-4B, for instance, PLE reduced "no-think" reflective tokens on AIME24 from 2.54 to 0.39 (about 85% fewer) and nearly doubled accuracy, from 20.67% to 40.00%, without degrading "think"-mode performance. These results suggest that controllable hybrid thinking is fundamentally an architectural problem, one that can be addressed effectively by separating mode-specific feed-forward pathways.
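To make the leakage metric more concrete, here is a minimal sketch that counts reflective markers in each response and averages them, assuming the reported AIME24 figures are per-response averages. The marker list and the functions `count_reflective` and `mean_reflective` are hypothetical; the evaluation's actual definition of a reflective token may differ.

```python
import re
from typing import Sequence

# Hypothetical marker set: the exact definition of "reflective tokens" behind
# the AIME24 numbers is not given here, so these strings are illustrative only.
REFLECTIVE_MARKERS = ("wait", "hmm", "alternatively", "let me double-check")


def count_reflective(text: str, markers: Sequence[str] = REFLECTIVE_MARKERS) -> int:
    """Count whole-word, case-insensitive occurrences of reflective markers in one response."""
    lowered = text.lower()
    return sum(len(re.findall(r"\b" + re.escape(m) + r"\b", lowered)) for m in markers)


def mean_reflective(responses: Sequence[str]) -> float:
    """Average reflective-marker count per response (0.0 for an empty list)."""
    if not responses:
        return 0.0
    return sum(count_reflective(r) for r in responses) / len(responses)


if __name__ == "__main__":
    print(count_reflective("Wait, 2+2=4. Hmm, wait, yes."))  # 3
```

Word boundaries keep the count from firing on substrings such as "awaiting"; a tokenizer-level count would be closer to a true "token" metric but depends on the model's vocabulary.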