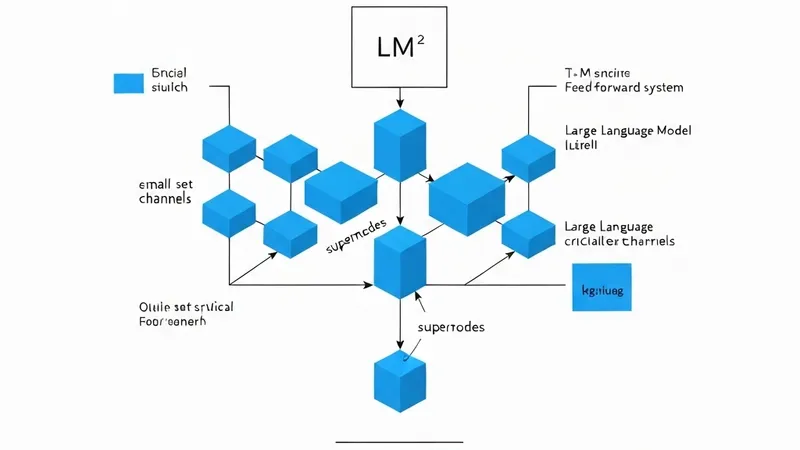

New research has delved into the organization of channel-level importance within transformer feed-forward networks (FFNs), identifying crucial "supernodes" and their significance in model pruning.

Utilizing a Fisher-style loss proxy (LP) based on activation-gradient second moments, researchers found that loss sensitivity is concentrated in a small set of channels within each LLM FFN layer. For instance, in Llama-3.1-8B, the top 1% of channels per layer account for a median of 58.7% of the LP mass, with a range of 33.0% to 86.1%. These loss-critical channels have been termed "supernodes."

Importantly, these LP-defined supernodes overlap only weakly with activation-defined outliers and cannot be solely explained by activation power or weight norms. Around this core, the study also identified a weaker but consistent "halo structure," where some non-supernode channels share the supernodes' write support and exhibit stronger redundancy with the protected core.

To diagnose this organization, a one-shot structured FFN pruning test was conducted. Baselines that pruned many supernodes degraded sharply at 50% FFN sparsity. In contrast, SCAR variants, which explicitly protect the supernode core, demonstrated superior robustness. The strongest variant, SCAR-Prot, achieved a perplexity of 54.8, significantly outperforming baseline methods like Wanda-channel, which yielded 989.2.

This LP-concentration pattern is not isolated; it appears across Mistral-7B, Llama-2-7B, and Qwen2-7B, remains visible in targeted Llama-3.1-70B experiments, and even increases during OLMo-2-7B pretraining. These results collectively suggest that LLM FFNs develop a small learned core of loss-critical channels, and preserving this core is paramount for reliable structured pruning.