Reinforcement learning (RL), particularly in the post-training of large language models (LLMs), is hampered by noisy supervision and poor out-of-domain (OOD) generalization. Recent distributional RL methods aim to improve robustness by modeling the value as a set of quantile points, but they often learn each quantile independently as a scalar. The resulting value representations are coarse-grained and struggle to incorporate fine-grained state information, especially under complex and OOD conditions.
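To make the "independent scalar quantile" setup concrete, here is a minimal sketch of standard quantile regression with the pinball loss, where each quantile is fit on its own. The function names `pinball_loss` and `fit_quantiles`, the learning rate, and the synthetic returns are illustrative assumptions, not taken from any specific method discussed here.

```python
import numpy as np

def pinball_loss(pred, target, tau):
    """Quantile (pinball) loss of a scalar estimate `pred` at level `tau`."""
    diff = target - pred
    return np.mean(np.maximum(tau * diff, (tau - 1.0) * diff))

def fit_quantiles(returns, taus, lr=0.05, steps=2000):
    """Fit one scalar per quantile level, each independently of the others.

    Hypothetical helper: plain subgradient descent on the pinball loss.
    No information is shared between quantiles, which is the limitation
    described in the paragraph above.
    """
    q = np.zeros(len(taus))
    for _ in range(steps):
        for i, tau in enumerate(taus):
            # Subgradient of the pinball loss w.r.t. q[i]:
            # -tau where the target lies above q[i], (1 - tau) otherwise.
            grad = np.mean(np.where(returns > q[i], -tau, 1.0 - tau))
            q[i] -= lr * grad
    return q

# Synthetic returns standing in for sampled value targets (assumption).
rng = np.random.default_rng(0)
returns = rng.normal(loc=0.0, scale=1.0, size=5000)
taus = [0.1, 0.5, 0.9]
quantiles = fit_quantiles(returns, taus)
```

Each entry of `quantiles` is updated using only its own level `tau`, so nothing ties the estimates together beyond the shared data.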

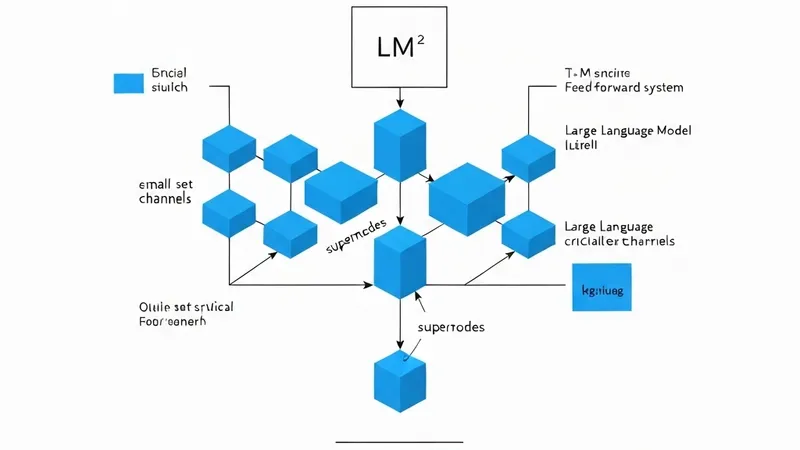

To address these challenges, DFPO (Distributional Value Flow Policy Optimization with Conditional Risk and Consistency Control) has been proposed as a robust distributional RL framework. DFPO models values as continuous flows across time steps: rather than predicting isolated quantiles, it learns a value flow field, which scales up value modeling and captures richer state information for more accurate advantage estimation. To stabilize training under noisy feedback, DFPO adds conditional risk control and consistency constraints along its value flow trajectories. In experiments on dialogue, math reasoning, and scientific tasks, DFPO is reported to outperform baselines such as PPO and FlowRL under noisy supervision, with improved training stability and generalization.
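The text does not specify how the value flow field is parameterized or trained, so the following is only a generic illustration of what "learning a flow field instead of isolated quantiles" can mean: a velocity field transports base samples into samples of a target distribution by numerical integration. The velocity field here is hand-written rather than learned, and `toy_velocity`, `transport`, `mu`, and `sigma` are hypothetical names. The integration variable `t` is a toy flow time and may not correspond to the "time steps" in DFPO.

```python
import numpy as np

def toy_velocity(z, t, mu, sigma):
    """Hand-written (not learned) velocity field, for illustration only.

    It moves samples of N(0, 1) to samples of N(mu, sigma^2) along
    straight lines z_t = z0 + t * (mu + (sigma - 1) * z0), with sigma > 0.
    """
    z0 = (z - t * mu) / (1.0 + t * (sigma - 1.0))  # recover the start point
    return mu + (sigma - 1.0) * z0                  # constant along each path

def transport(z0, mu, sigma, n_steps=10):
    """Euler-integrate dz/dt = v(z, t) from t = 0 to t = 1."""
    z = z0.copy()
    h = 1.0 / n_steps
    for k in range(n_steps):
        z = z + h * toy_velocity(z, k * h, mu, sigma)
    return z

# Base samples pushed through the flow give samples of the target
# distribution; their mean can serve as a scalar value estimate.
rng = np.random.default_rng(0)
base = rng.normal(size=1000)
value_samples = transport(base, mu=2.0, sigma=0.5)
value_estimate = value_samples.mean()
```

In a learned version, the velocity field would be a network conditioned on the state, so a single model describes the whole return distribution instead of a fixed set of scalar quantiles.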