Understanding how policies are debated and justified in parliament is fundamental to the democratic process. However, the volume and complexity of these debates make it difficult for outside audiences to engage with them.

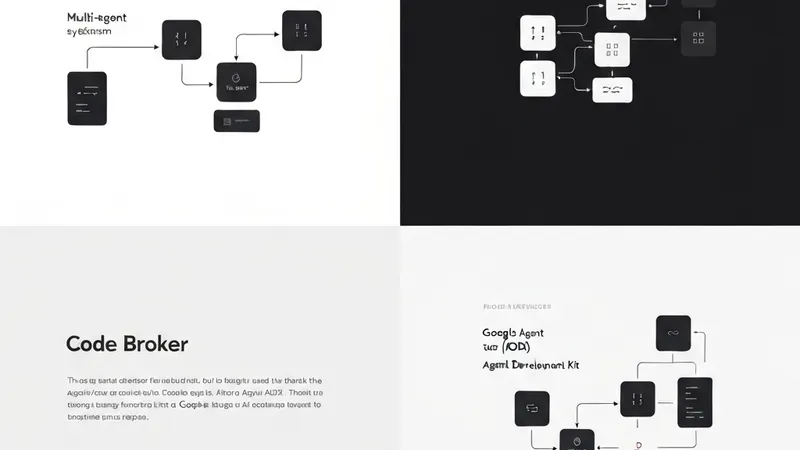

In parallel, Large Language Models (LLMs) have made automated summarization possible at scale. Such summaries can make parliamentary procedures more accessible, but a critical challenge persists: evaluating whether LLM-generated summaries faithfully communicate the original argumentative content. Existing automated summarization metrics often correlate poorly with human judgments of consistency, that is, the faithfulness of a summary to its source.

To address this, researchers have proposed a formal framework for evaluating parliamentary debate summaries that grounds argument structures in the contested proposals under debate. Built on computational argumentation, the approach focuses its evaluation on formal properties that capture whether a summary faithfully preserves the reasoning presented to justify or oppose specific policy outcomes.

The methodology was demonstrated in a case study of debates from the European Parliament and their associated LLM-driven summaries, suggesting a path toward more robust and reliable evaluation of LLM performance in summarizing complex argumentative text.