Large language model (LLM) agents receive instructions from many sources (system messages, user prompts, tool outputs, and other agents), each carrying its own level of trust and authority. When these instructions conflict, an agent must reliably identify and follow the highest-privilege instruction to remain safe and effective.

The prevailing "instruction hierarchy (IH)" paradigm assumes a small, fixed set of privilege levels (typically fewer than five), defined by rigid role labels such as "system > user." This is inadequate for real-world agentic settings, where conflicts can arise across a far greater variety of sources and contexts.

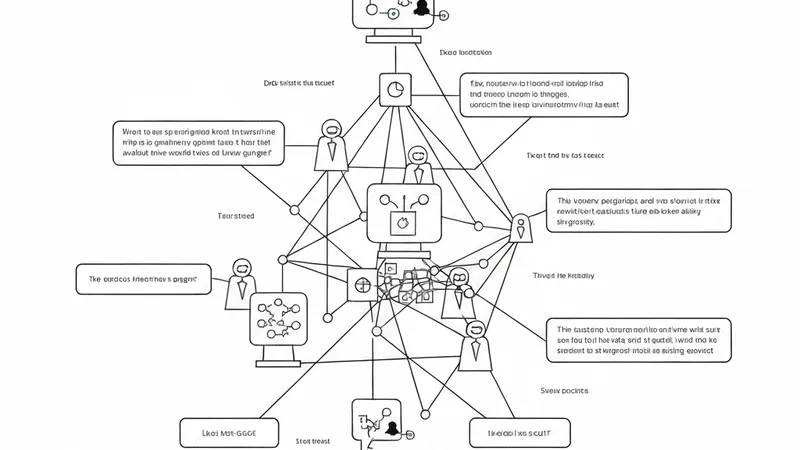

To address this limitation, new research introduces the Many-Tier Instruction Hierarchy (ManyIH), a paradigm for resolving conflicts among instructions that span an arbitrarily large number of privilege levels.
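To make the idea concrete, here is a minimal sketch of many-tier conflict resolution. It is not the paper's method: the `Instruction` class, the integer `privilege` field (larger meaning higher privilege), the key/value form of a constraint, and the first-seen tie-break are all assumptions made for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Instruction:
    source: str     # hypothetical label for where the instruction came from
    privilege: int  # assumption: a larger number means higher privilege
    key: str        # the setting this instruction constrains
    value: str      # the value it demands for that setting


def resolve(instructions):
    """For each constrained key, keep the highest-privilege instruction.

    Ties keep the instruction seen first (an arbitrary choice for this sketch).
    """
    winners = {}
    for ins in instructions:
        current = winners.get(ins.key)
        if current is None or ins.privilege > current.privilege:
            winners[ins.key] = ins
    return winners


# Five instructions across five distinct privilege levels, with two conflicts.
instructions = [
    Instruction("system", 12, "language", "Python"),
    Instruction("user", 7, "language", "Rust"),
    Instruction("tool_output", 2, "language", "Go"),
    Instruction("user", 7, "max_lines", "50"),
    Instruction("subagent", 4, "max_lines", "200"),
]

resolved = resolve(instructions)
for key, ins in resolved.items():
    print(f"{key}: {ins.value} (from {ins.source}, level {ins.privilege})")
```

Because privilege here is an ordinary integer rather than a fixed role label, the same function works unchanged whether there are two levels or hundreds. The difficulty the benchmark probes is whether a model can perform this kind of resolution implicitly, from instructions expressed in natural language.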

The work also introduces ManyIH-Bench, the first benchmark designed specifically for ManyIH. ManyIH-Bench challenges models to navigate up to 12 levels of conflicting instructions with varying privileges. It comprises 853 agentic tasks: 427 coding tasks and 426 instruction-following tasks.

The benchmark builds realistic, difficult test cases covering 46 real-world agents by composing constraints that were written by LLMs and then verified by humans. Experimental results indicate that even current frontier models perform poorly, reaching only about 40% accuracy as instruction conflicts scale in complexity.

This research underscores the critical and urgent need for new methods explicitly focused on fine-grained and scalable instruction conflict resolution within agentic environments.