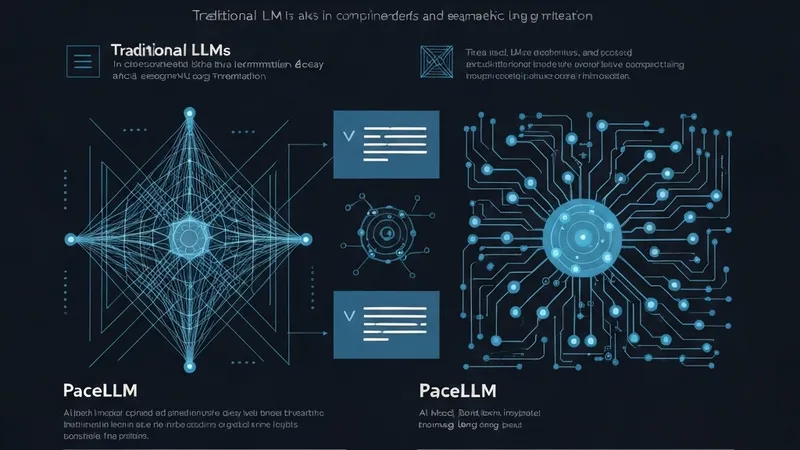

While Large Language Models (LLMs) demonstrate strong performance across domains, their long-context capabilities are significantly limited by two key issues: transient neural activations causing information decay, and unstructured feed-forward network (FFN) weights leading to semantic fragmentation.

Inspired by the brain's working memory and cortical modularity, PaceLLM introduces two innovations. First, a Persistent Activity (PA) Mechanism mimics the persistent firing of prefrontal cortex (PFC) neurons with an activation-level memory bank that dynamically retrieves, reuses, and updates critical FFN states, countering contextual decay. Second, Cortical Expert (CE) Clustering emulates task-adaptive neural specialization by reorganizing FFN weights into semantic modules, which establishes robust cross-token dependencies and mitigates semantic fragmentation.
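
To make the two ideas more concrete, here is a minimal NumPy sketch. It is not PaceLLM's implementation: the class `ActivationMemoryBank`, the function `cluster_ffn_neurons`, the cosine-similarity lookup, the blending rule, the oldest-first eviction, and the use of k-means are all assumptions chosen for illustration. The first part shows one plausible shape for a retrieve/reuse/update loop over FFN hidden states; the second shows how FFN neurons can be regrouped into contiguous clusters by permuting the hidden dimension, which leaves the FFN output unchanged.

```python
import numpy as np


class ActivationMemoryBank:
    """Toy activation-level memory (hypothetical, not the paper's design)."""

    def __init__(self, capacity=4, threshold=0.9, blend=0.5):
        self.capacity = capacity    # assumed: max number of stored activations
        self.threshold = threshold  # assumed: cosine similarity that counts as a hit
        self.blend = blend          # assumed: weight of the stored state on a hit
        self.slots = []             # stored FFN hidden states, oldest first

    def step(self, h):
        """Return the (possibly memory-augmented) activation and update the bank."""
        if self.slots:
            bank = np.stack(self.slots)
            norms = np.linalg.norm(bank, axis=1) * np.linalg.norm(h) + 1e-8
            sims = bank @ h / norms
            best = int(np.argmax(sims))
            if sims[best] >= self.threshold:
                # Retrieve and reuse: mix the stored state into the current one.
                out = self.blend * self.slots[best] + (1.0 - self.blend) * h
                # Update: the slot now holds the refreshed state.
                self.slots[best] = out
                return out
        # Miss: store the new activation, evicting the oldest entry if full.
        self.slots.append(h.copy())
        if len(self.slots) > self.capacity:
            self.slots.pop(0)
        return h


def ffn(x, w_in, w_out):
    """Two-layer ReLU FFN: w_in is (hidden, d_model), w_out is (d_model, hidden)."""
    return w_out @ np.maximum(w_in @ x, 0.0)


def cluster_ffn_neurons(w_in, w_out, n_clusters=2, n_iters=10, seed=0):
    """Group hidden neurons by k-means on their input weights (an assumed criterion).

    Rows of w_in and columns of w_out are permuted together, so neurons in the
    same cluster become contiguous and the FFN output is unchanged.
    """
    rng = np.random.default_rng(seed)
    centers = w_in[rng.choice(len(w_in), n_clusters, replace=False)]
    for _ in range(n_iters):
        dists = ((w_in[:, None, :] - centers[None, :, :]) ** 2).sum(-1)
        labels = dists.argmin(axis=1)
        for k in range(n_clusters):
            if np.any(labels == k):
                centers[k] = w_in[labels == k].mean(axis=0)
    order = np.argsort(labels, kind="stable")
    return w_in[order], w_out[:, order], labels[order]
```

In this sketch, `bank.step(h)` would be called on each FFN hidden state as tokens or segments are processed, and `cluster_ffn_neurons` would be run once on a layer's weights. Because the same permutation is applied to both weight matrices, the regrouping by itself changes only the layout of the weights, not the layer's output.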

In evaluations, PaceLLM shows a 6% improvement on LongBench's Multi-document QA tasks and gains of 12.5% to 17.5% on Infinite-Bench tasks. In Needle-In-A-Haystack (NIAH) tests, it extends the measurable context length to 200,000 tokens. This work in brain-inspired LLM optimization is complementary to existing approaches, generalizable to any model, and enhances long-context performance and interpretability without requiring structural overhauls.