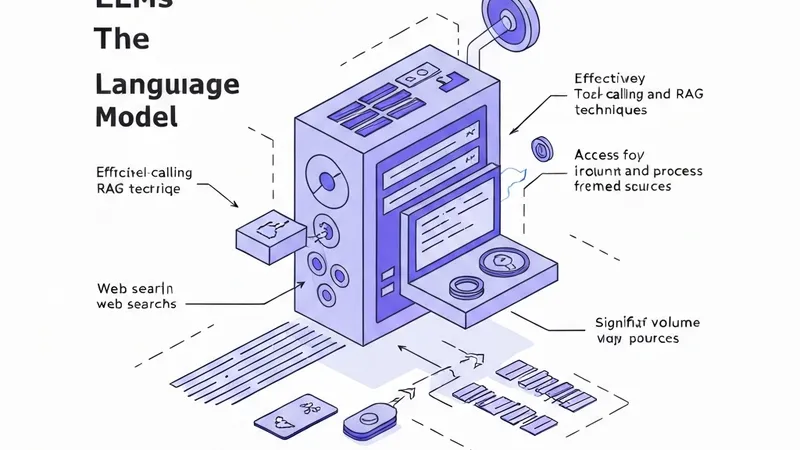

Large language models (LLMs) are now routinely deployed for autonomous execution of complex tasks, ranging from natural language processing to dynamic workflows like web searches. The integration of tool-calling and Retrieval Augmented Generation (RAG) significantly enhances LLMs' ability to process and retrieve sensitive corporate data, concurrently amplifying both their functionality and their vulnerability to exploitation.

As LLMs increasingly interact with external data sources, indirect prompt injection has emerged as a critical and evolving attack vector. This method allows adversaries to compromise models through manipulated inputs, potentially leading to data exfiltration. To thoroughly investigate this threat, a systematic evaluation of indirect prompt injection attacks was conducted across diverse models, analyzing their susceptibility to such attacks.

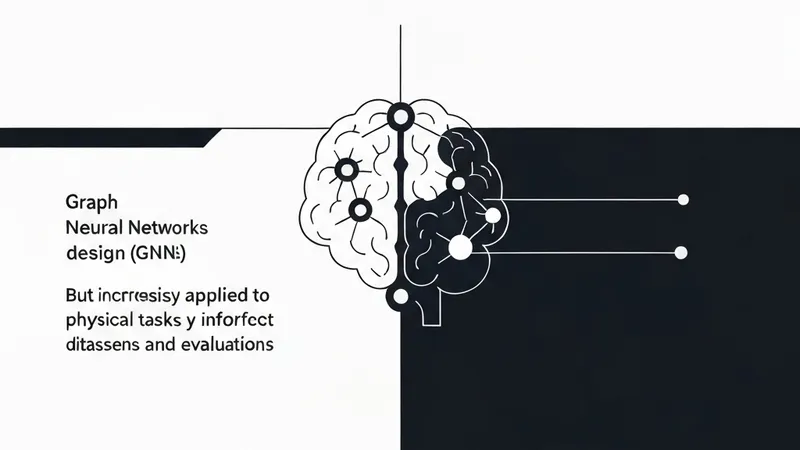

The research specifically examined parameters influencing vulnerability, including model size, manufacturer, and specific implementations, while also identifying the most effective attack methodologies. The findings are concerning: even well-known attack patterns continue to succeed, exposing persistent weaknesses within current model defenses.

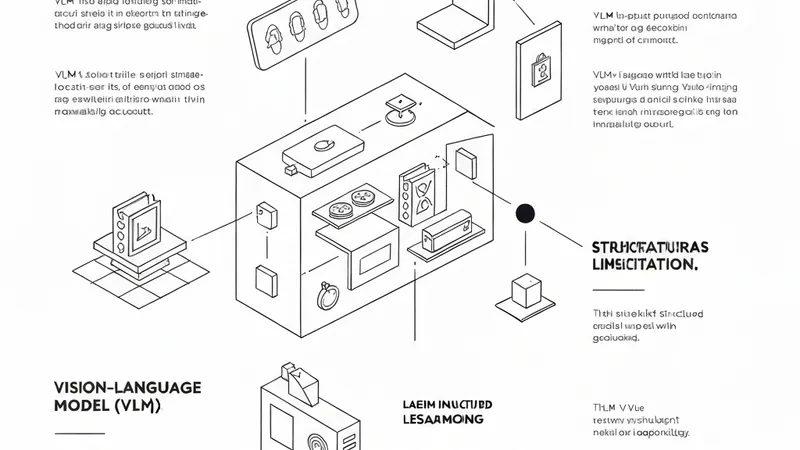

To mitigate these vulnerabilities, the study underscores the necessity for several key interventions: firstly, strengthening training procedures to enhance inherent model resilience; secondly, establishing a centralized database of known attack vectors to facilitate proactive defense; and thirdly, implementing a unified testing framework to ensure continuous security validation. These measures are crucial for driving developers to integrate security into the core design of LLMs, as current models have demonstrated an inability to effectively mitigate long-standing threats.