Modern large language models (LLMs) are no longer exclusively trained on raw internet text. Increasingly, companies are leveraging powerful “teacher” models to train smaller, more efficient “student” models. This process, broadly known as LLM distillation or model-to-model training, has become a pivotal technique for building high-performing models at lower computational costs.

For instance, Meta utilized its massive Llama 4 Behemoth model to assist in training Llama 4 Scout and Maverick. Similarly, Google leveraged Gemini models during the development of Gemma 2 and Gemma 3, and DeepSeek distilled reasoning capabilities from DeepSeek-R1 into smaller Qwen and Llama-based models.

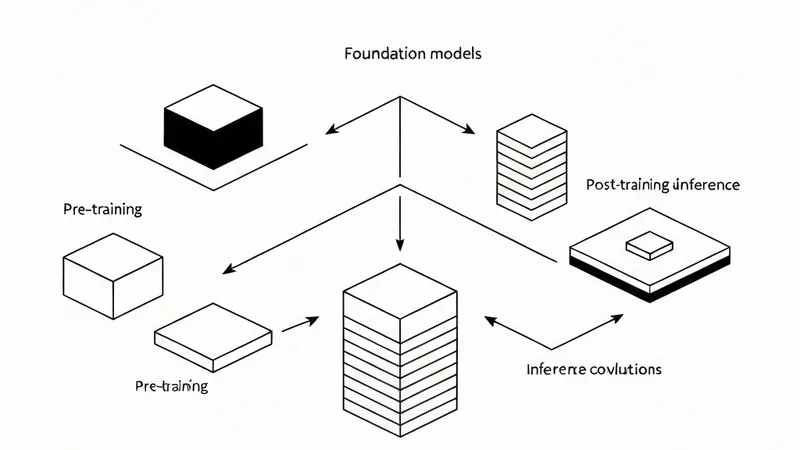

The core concept is straightforward: instead of solely learning from human-written text, a student model can also learn from the outputs, probability distributions, reasoning traces, or behaviors of another LLM. This enables smaller models to inherit sophisticated capabilities such as reasoning, instruction following, and structured generation from much larger systems. Distillation can occur during pre-training, where teacher and student models are trained collaboratively, or during post-training, where a fully trained teacher transfers knowledge to a separate student model.

This article will explore three major approaches employed for training one LLM using another: Soft-label distillation, where the student learns from the teacher’s output probability distributions; Hard-label distillation, where the student imitates the teacher’s specific generated outputs; and Co-distillation, where multiple models learn collaboratively by sharing predictions and behaviors during training.

Soft-Label Distillation

Soft-label distillation is a training technique where a smaller student LLM learns by imitating the output probability distribution of a larger teacher LLM. Instead of being trained solely on predicting the single correct next token, the student is trained to match the teacher’s softmax probabilities across the entire vocabulary. For example, if the teacher predicts the next token with probabilities such as “cat” = 70%, “dog” = 20%, and “animal” = 10%, the student learns not just the final answer, but also the nuanced relationships and uncertainty between different tokens. This richer signal is often referred to as the teacher’s “dark knowledge” because it contains hidden information about reasoning patterns and semantic understanding.

The primary advantage of soft-label distillation is that it allows smaller models to inherit advanced capabilities from much larger models, while remaining faster and cheaper to deploy. Since the student learns from the teacher’s full probability distribution, training becomes more stable and informative compared to learning from hard one-word targets alone. However, this method also presents practical challenges. To generate soft labels, access to the teacher model’s logits or weights is often required, which is typically not feasible with closed-source models. Furthermore, storing probability distributions for every token across vocabularies containing 100k+ tokens can incur significant storage costs.