Recent advances in large language models (LLMs) have fueled interest in fully autonomous AI agents. In practice, however, these LLM-based agents still face substantial challenges: reduced reliability caused by "hallucinations," difficulty executing complex tasks, and considerable safety and ethical risks. All three limit their practical feasibility and trustworthiness.

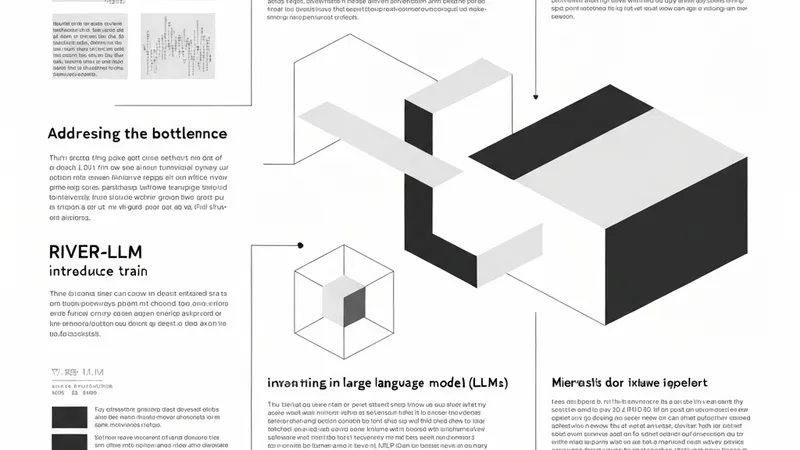

To address these limitations, researchers are increasingly turning to LLM-based human-agent systems (LLM-HAS), which integrate human-provided information, feedback, or control directly into the agent architecture. The goal is to improve overall system performance, reliability, and safety. The premise of LLM-HAS is that human intelligence and LLM-powered agents have complementary strengths, and that effective collaboration can draw on both.
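
To make the idea of integrating human feedback and control concrete, here is a minimal sketch of a human-in-the-loop agent loop. It is illustrative only and is not taken from the survey: `Feedback`, `run_with_human`, `stub_agent`, and `stub_reviewer` are hypothetical names, and the agent is a plain function standing in for an LLM call.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class Feedback:
    """Hypothetical container for a human reviewer's response."""
    approved: bool
    comment: Optional[str] = None  # guidance passed back to the agent


def run_with_human(
    task: str,
    propose: Callable[[str, Optional[str]], str],
    review: Callable[[str, str], Feedback],
    max_rounds: int = 3,
) -> Optional[str]:
    """Return the first proposal the human approves, or None if none is."""
    hint: Optional[str] = None
    for _ in range(max_rounds):
        proposal = propose(task, hint)    # agent acts (an LLM call in practice)
        feedback = review(task, proposal)  # human evaluates the proposal
        if feedback.approved:
            return proposal
        hint = feedback.comment           # feedback shapes the next attempt
    return None  # never approved: the caller should escalate, not act


def stub_agent(task: str, hint: Optional[str]) -> str:
    # Stand-in for an LLM: revises its draft only when given a hint.
    if hint is None:
        return f"{task}: draft"
    return f"{task}: revised ({hint})"


def stub_reviewer(task: str, proposal: str) -> Feedback:
    # Stand-in for a person: rejects first drafts, approves revisions.
    if "revised" in proposal:
        return Feedback(approved=True)
    return Feedback(approved=False, comment="cite sources")


result = run_with_human("summarize report", stub_agent, stub_reviewer)
print(result)
```

In this sketch the human holds final control: nothing is returned unless a reviewer approves it, and each rejection is fed back into the next proposal.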

A new structured survey, recently accepted to ACL 2026 (Findings), provides the first in-depth overview of LLM-HAS. The paper clarifies the field's fundamental concepts and systematically presents the core components of these systems: environment and profiling, human feedback mechanisms, interaction types, orchestration strategies, and communication protocols.

The survey also covers emerging applications of LLM-HAS and examines the challenges and opportunities specific to human-AI collaboration. By consolidating current knowledge into a structured perspective, it aims to stimulate further research in this rapidly evolving, interdisciplinary field.