Google's Gemini AI models have advanced significantly, but their use remains subject to Google's proprietary terms. The Gemma open-weight models offer greater flexibility, and Gemma 3, now more than a year old, is being succeeded by Gemma 4. Gemma 4 comes in four sizes optimized for local deployment, and developers can begin working with it today. Responding to developer feedback about AI licensing, Google has also moved from its custom Gemma license to Apache 2.0.

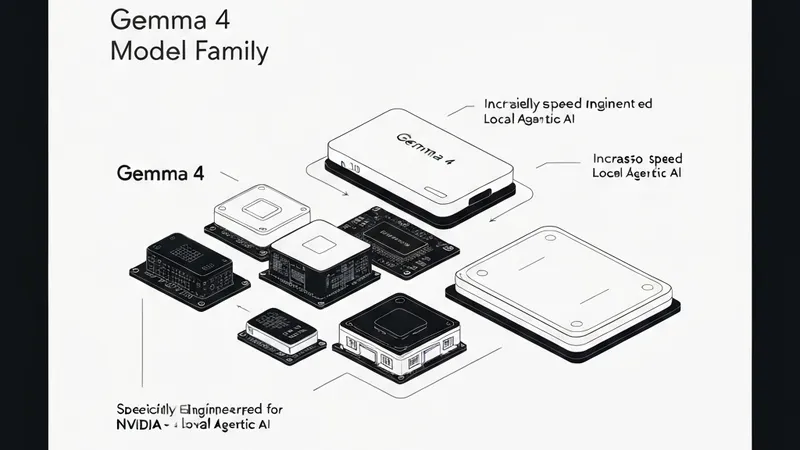

Like prior open-weight releases, Gemma 4 is built to run on local machines. The two larger Gemma 4 variants, a 26B Mixture of Experts (MoE) model and a 31B Dense model, are designed to run unquantized in bfloat16 format on a single 80GB Nvidia H100 GPU. An H100 is a substantial investment, but these larger models can also be quantized to lower precision so they fit on consumer-grade GPUs.
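
The memory claim can be sanity-checked with back-of-the-envelope arithmetic: weight storage is roughly the parameter count multiplied by the bytes used per parameter. The sketch below is an illustration only. It counts weights alone (no KV cache, activations, or runtime overhead), and the `int8` and `int4` entries are assumed example precisions, not formats Google has named for Gemma 4.

```python
# Rough weight-only memory estimate: parameters x bytes per parameter.
# Assumption: the int8/int4 rows are illustrative quantization levels,
# not formats confirmed for Gemma 4.
BYTES_PER_PARAM = {"bfloat16": 2.0, "int8": 1.0, "int4": 0.5}


def weight_memory_gb(params_billions: float, dtype: str) -> float:
    """Approximate weight storage in GB (10^9 bytes) for a given precision."""
    # (params_billions * 1e9 params) * bytes / 1e9 bytes-per-GB
    return params_billions * BYTES_PER_PARAM[dtype]


if __name__ == "__main__":
    for name, size in [("26B MoE", 26.0), ("31B Dense", 31.0)]:
        for dtype in BYTES_PER_PARAM:
            gb = weight_memory_gb(size, dtype)
            print(f"{name} @ {dtype}: ~{gb:.1f} GB of weights")
```

Under these assumptions the 31B Dense model needs about 62 GB for weights in bfloat16, which is consistent with fitting on an 80GB H100, while a 4-bit version would need roughly 15.5 GB before overhead.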

Google emphasizes its focus on reducing latency to maximize the advantages of Gemma's local processing. The 26B Mixture of Experts model notably activates only 3.8 billion of its 26 billion parameters during inference, leading to significantly higher tokens-per-second throughput compared to similarly sized models. The 31B Dense model, conversely, prioritizes quality, with Google anticipating developers will fine-tune it for specific applications.
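
To put the MoE figures in perspective, the short sketch below computes what share of the 26B model's parameters is active per token, using only the numbers quoted above. The function name is hypothetical, and the comparison with the 31B Dense model assumes a dense model uses all of its parameters for every token. Parameter counts are only a rough proxy for speed, so this is not a throughput prediction.

```python
def active_fraction(active_billions: float, total_billions: float) -> float:
    """Share of a model's parameters that participate in each token."""
    return active_billions / total_billions


if __name__ == "__main__":
    moe_active, moe_total = 3.8, 26.0   # figures quoted for the 26B MoE
    dense_total = 31.0                  # assumption: dense uses every parameter

    share = active_fraction(moe_active, moe_total)
    print(f"MoE active share: {share:.1%}")  # prints "MoE active share: 14.6%"

    ratio = dense_total / moe_active
    print(f"Dense vs. MoE parameters per token: {ratio:.1f}x")
```

Note that an MoE model typically still needs all of its weights resident in memory, so the saving shows up in per-token compute rather than in the memory footprint.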

The two smaller Gemma 4 models, Effective 2B (E2B) and Effective 4B (E4B), are designed for mobile devices and edge computing. They target low memory usage during inference, with effective parameter counts of 2 billion and 4 billion. Google states that its Pixel team collaborated closely with Qualcomm and MediaTek to optimize these models for platforms such as smartphones, Raspberry Pi, and Jetson Nano, and it promises lower memory and battery consumption than Gemma 3, alongside “near-zero latency.”

Google says all of the new Gemma 4 models significantly outperform Gemma 3 and asserts that they are the most capable models deployable on local hardware. The 31B Gemma 4 model is projected to debut at number three on the Arena list of top open AI models, trailing only GLM-5 and Kimi 2.5. Even the largest Gemma 4 variant is considerably smaller than these leading competitors, which should in theory make it cheaper to run.