The landscape of modern AI is rapidly shifting from total reliance on massive, generalized cloud models to an era of local, agentic AI powered by platforms like OpenClaw. The potential for generative AI on local hardware is significant, whether deploying a vision-enabled assistant on an edge device or building an always-on agent that automates complex coding workflows.

However, developers consistently run into a bottleneck that is also a hidden cost: the “Token Tax,” the per-token fee charged by cloud APIs. The challenge is letting an AI system process multimodal inputs continuously, quickly, and reliably without running up a cloud bill for every token generated.
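To make the cost concern concrete, the arithmetic can be sketched as follows. The function name, the workload figures, and the price per million tokens are all hypothetical placeholders rather than quotes from any provider; substitute your own numbers.

```python
def monthly_token_cost(
    tokens_per_request: int,
    requests_per_day: int,
    usd_per_million_tokens: float,
    days: int = 30,
) -> float:
    """Estimate a monthly cloud bill from per-token pricing.

    All inputs are illustrative assumptions; real pricing often
    differs between input and output tokens.
    """
    total_tokens = tokens_per_request * requests_per_day * days
    return total_tokens / 1_000_000 * usd_per_million_tokens


if __name__ == "__main__":
    # Hypothetical always-on agent: 2,000 tokens per request,
    # 5,000 requests per day, $10 per million tokens.
    cost = monthly_token_cost(2_000, 5_000, 10.0)
    print(f"Estimated monthly cost: ${cost:,.2f}")
```

Running the same workload on local hardware replaces this recurring per-token charge with the up-front cost of the device and its power draw.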

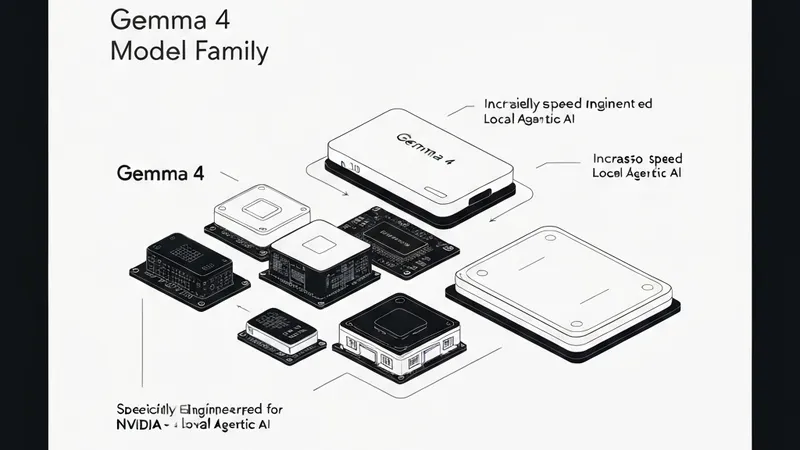

The way to eliminate per-token API costs is to run the model locally, using the new Google Gemma 4 family with NVIDIA GPUs as the hardware platform of choice.

Google’s latest additions to the Gemma 4 family introduce small, fast, and omni-capable models explicitly built for efficient local execution across a wide range of devices. Optimized in collaboration with NVIDIA, these models scale effortlessly from Jetson Orin Nano edge AI modules to GeForce RTX PCs, workstations, and the DGX Spark personal AI supercomputer.

The Agentic AI Paradigm

The Gemma 4 family acts as a high-performance engine for local AI agents. Spanning E2B, E4B, 26B, and 31B variants, these models are designed for efficient deployment anywhere. They natively support structured tool use (function calling) for agents and offer interleaved multimodal inputs, allowing developers to mix text and images in any order within a single prompt.
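The two capabilities described above, interleaved multimodal prompts and structured tool use, can be sketched in plain Python. This is a minimal illustration, not Gemma 4's actual interface: the message schema, the JSON shape of the tool call, and the `get_temperature` tool are all assumptions made for the example, and no model is invoked.

```python
import json
from typing import Any, Callable

IMAGE_SUFFIXES = (".png", ".jpg", ".jpeg")


def interleaved_message(*parts: str) -> dict[str, Any]:
    """Build one user message that mixes text and images in the given order.

    Assumption for this sketch: any part ending in an image suffix is an
    image path; everything else is text.
    """
    content = []
    for part in parts:
        if part.lower().endswith(IMAGE_SUFFIXES):
            content.append({"type": "image", "path": part})
        else:
            content.append({"type": "text", "text": part})
    return {"role": "user", "content": content}


def get_temperature(sensor_id: str) -> float:
    """Hypothetical tool: look up a reading from a stubbed sensor table."""
    readings = {"s1": 21.5, "s2": 19.0}
    return readings[sensor_id]


# Registry of tools the agent is allowed to call, keyed by name.
TOOLS: dict[str, Callable[..., Any]] = {"get_temperature": get_temperature}


def dispatch_tool_call(raw_call: str) -> Any:
    """Parse a JSON tool call (assumed shape: name + arguments) and run it."""
    call = json.loads(raw_call)
    name = call["name"]
    if name not in TOOLS:
        raise KeyError(f"Unknown tool: {name}")
    return TOOLS[name](**call.get("arguments", {}))


if __name__ == "__main__":
    msg = interleaved_message(
        "Compare this board", "before.png", "with this one", "after.jpg"
    )
    print([p["type"] for p in msg["content"]])

    # Stand-in for the structured output a model might emit.
    model_output = '{"name": "get_temperature", "arguments": {"sensor_id": "s1"}}'
    print(dispatch_tool_call(model_output))
```

Keeping the tool registry as an explicit dictionary means the agent can only run functions the developer has listed, which matters when the model's output drives local code execution.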

Depending on hardware and goals, developers generally choose one of two tiers:

1. Ultra-Efficient Edge Models (E2B and E4B)

- The Tech: Gemma 4 E2B and E4B.

- How it Works: These models are built for ultra-efficient, low-latency inference at the edge. Because they run completely offline, there is no network round trip and there are no API fees.

- Best For: IoT devices, robotics, and localized sensor networks.

- Hardware Needed: Devices including NVIDIA Jetson Orin Nano modules.

2. High-Performance Agentic Models (26B and 31B)

- The Tech: Gemma 4 26B and 31B.

- How it Works: These variants are designed specifically for high-performance reasoning and developer-centric workflows.

- Best For: Complex problem-solving, code generation, and running agentic AI.

- Hardware Needed: NVIDIA RTX GPUs, workstations, and DGX Spark systems.
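The two tiers above can be captured as a small lookup that suggests model variants for a given device. This is a sketch under stated assumptions: the `TIERS` table only restates the hardware named in this article, the substring matching is a simplification, and `suggest_models` is a hypothetical helper, not part of any Gemma or NVIDIA tooling.

```python
# Tier table restating the article's pairing of variants and hardware.
# The keyword lists are assumptions used for simple substring matching.
TIERS = {
    "edge": {
        "models": ("E2B", "E4B"),
        "hardware_keywords": ("jetson orin nano",),
    },
    "agentic": {
        "models": ("26B", "31B"),
        "hardware_keywords": ("rtx", "workstation", "dgx spark"),
    },
}


def suggest_models(hardware: str) -> tuple:
    """Return the Gemma 4 variants the article pairs with this hardware."""
    name = hardware.lower()
    for tier in TIERS.values():
        if any(keyword in name for keyword in tier["hardware_keywords"]):
            return tier["models"]
    raise ValueError(f"No tier listed for hardware: {hardware!r}")


if __name__ == "__main__":
    print(suggest_models("NVIDIA Jetson Orin Nano"))
    print(suggest_models("GeForce RTX PC"))
```

A real deployment would also check available memory and the quantization in use before settling on a variant; those details are outside what this table encodes.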