Google's newly released open-source model, Gemma 4, processes text, images, and audio entirely on-device. Using agent skills, the model can call tools such as Wikipedia or interactive maps on its own, without relying on cloud-based inference.

The Google AI Edge Gallery app, which is necessary to run Gemma 4, is available for free on both Android and iOS. Following its release, the app quickly ascended to fourth place among the most-downloaded free productivity apps in the iOS App Store, positioned behind Claude, Gemini, and ChatGPT.

Gemma 4 is built on the same research as Google's proprietary Gemini 3 model and is released under the commercially permissive Apache 2.0 license. Google reports that the Gemma family has accumulated over 400 million downloads since its first generation. All models support multimodal processing (text, images, and audio) across more than 140 languages.

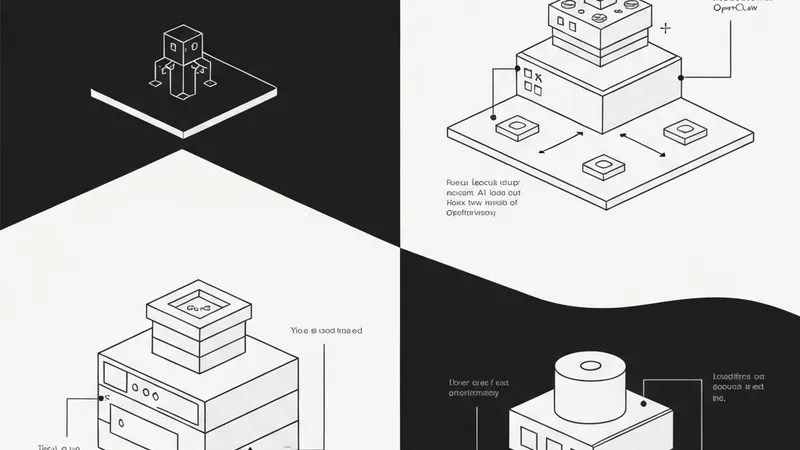

The latest Gemma 4 release comes in four model variants, covering devices from smartphones to servers. The E2B and E4B versions are specifically engineered for smartphones. The "E" denotes "effective parameters," referring to the number of parameters actively engaged during inference. In its quantized form, E2B occupies approximately 1.3 GB of on-device storage, while E4B requires about 2.5 GB.

The larger variants, the 26B and 31B models, are designed for servers and high-performance hardware. The 26B version uses a mixture-of-experts (MoE) architecture with 128 experts, of which only a subset totaling 3.8 billion parameters is active for any given token. The dense 31B model provides a context window of up to 256,000 tokens.
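To illustrate why only a fraction of an MoE model's parameters is active at once, here is a minimal sketch of top-k expert routing. It is not Gemma 4's actual implementation: the value of `TOP_K`, the toy experts, and the random router scores are all assumptions made for illustration. Only the figure of 128 experts comes from the reported specifications.

```python
import math
import random

NUM_EXPERTS = 128  # expert count reported for the 26B variant
TOP_K = 8          # assumption: the number of experts used per token is not stated


def route(router_logits, top_k=TOP_K):
    """Return (expert_index, weight) pairs for the top_k highest-scoring experts."""
    top = sorted(range(len(router_logits)),
                 key=lambda i: router_logits[i], reverse=True)[:top_k]
    # Softmax over the selected experts only, so their weights sum to 1.
    peak = max(router_logits[i] for i in top)
    exps = [math.exp(router_logits[i] - peak) for i in top]
    total = sum(exps)
    return [(i, e / total) for i, e in zip(top, exps)]


def moe_forward(x, experts, router_logits, top_k=TOP_K):
    """Weighted sum of the selected experts' outputs; the rest are never evaluated."""
    return sum(weight * experts[i](x) for i, weight in route(router_logits, top_k))


# Toy experts (hypothetical): each one simply scales its input by its own index.
experts = [lambda x, s=i: x * s for i in range(NUM_EXPERTS)]

# In a real model the router scores come from a learned layer; here they are random.
rng = random.Random(0)
logits = [rng.gauss(0.0, 1.0) for _ in range(NUM_EXPERTS)]
selected = route(logits)
```

Because `moe_forward` only calls the experts returned by `route`, the compute cost per token scales with `TOP_K` rather than with the total number of experts, even though all expert weights still have to be held in memory.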

Google collaborated with Arm and Qualcomm to optimize the smartphone variants for current mobile chipsets. According to Google, Gemma 4 runs up to four times faster on Android devices than its predecessor while using up to 60 percent less battery. Arm's own benchmarks indicate even greater improvements: an average 5.5x speedup, provided the device has a newer Arm chip with SME2 (Scalable Matrix Extension 2), an instruction-set extension that accelerates matrix computations for AI models directly in silicon.

The app requires at least Android 12 or iOS 17. The two phone-optimized Gemma 4 variants differ in their RAM requirements: E2B, at 1.3 GB quantized, runs on devices with 6 GB of RAM, whereas E4B, at about 2.5 GB, needs at least 8 GB.
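The RAM thresholds above amount to a simple selection rule. The following sketch encodes them; the function name `pick_variant` and the table layout are hypothetical, and the app's own selection logic may differ.

```python
# (name, quantized size in GB, minimum device RAM in GB), largest model first.
# Figures are the ones reported for the two phone-optimized variants.
VARIANTS = [
    ("E4B", 2.5, 8),
    ("E2B", 1.3, 6),
]


def pick_variant(device_ram_gb):
    """Return the largest variant whose RAM requirement the device meets, or None."""
    for name, _size_gb, min_ram_gb in VARIANTS:
        if device_ram_gb >= min_ram_gb:
            return name
    return None  # below 6 GB, neither variant is expected to run


choice_for_8gb_phone = pick_variant(8)
choice_for_6gb_phone = pick_variant(6)
```

Listing the variants largest first means the loop returns the most capable model a device can hold, falling back to the smaller one only when necessary.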

Beyond basic chat, image recognition, and audio transcription, the app integrates "agent skills" such as Wikipedia search, interactive maps, auto-generated summaries, and flashcards. Each skill can be toggled and managed individually. For instance, Gemma 4 can generate a QR code directly on-device using a JavaScript skill. The model can also describe photos, convert spoken input into diagrams and visualizations, and work with other local models for tasks like text-to-speech or image generation. A demo skill that describes animal calls and plays them back illustrates this interoperability.
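One way to picture individually toggled skills is a small registry that the model can only call into when a skill is switched on. The sketch below is purely illustrative: `Skill`, `SkillRegistry`, and the stub skills are hypothetical names and do not reflect the app's real API.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List


@dataclass
class Skill:
    name: str
    run: Callable[[str], str]  # takes the model's argument, returns text for the model
    enabled: bool = True


class SkillRegistry:
    def __init__(self) -> None:
        self._skills: Dict[str, Skill] = {}

    def register(self, skill: Skill) -> None:
        self._skills[skill.name] = skill

    def set_enabled(self, name: str, enabled: bool) -> None:
        self._skills[name].enabled = enabled

    def available(self) -> List[str]:
        """Names of the skills the model is currently allowed to call."""
        return sorted(s.name for s in self._skills.values() if s.enabled)

    def call(self, name: str, argument: str) -> str:
        skill = self._skills.get(name)
        if skill is None or not skill.enabled:
            raise LookupError(f"skill {name!r} is not available")
        return skill.run(argument)


# Stub skills: real ones would query Wikipedia or render an actual QR code.
registry = SkillRegistry()
registry.register(Skill("wikipedia_search", lambda q: f"[stub] summary for {q}"))
registry.register(Skill("qr_code", lambda text: f"[stub] QR code encoding {text}"))
registry.set_enabled("wikipedia_search", False)  # the user switches one skill off
```

After the last line, `registry.available()` lists only `qr_code`, and a call to the disabled skill raises `LookupError` instead of running it.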

Google also states that image recognition capabilities have received a substantial upgrade. Optical Character Recognition (OCR) tasks, involving the extraction of text from images, diagrams, or handwriting, now yield notably improved results. The model’s handling of time-related information has also been enhanced.