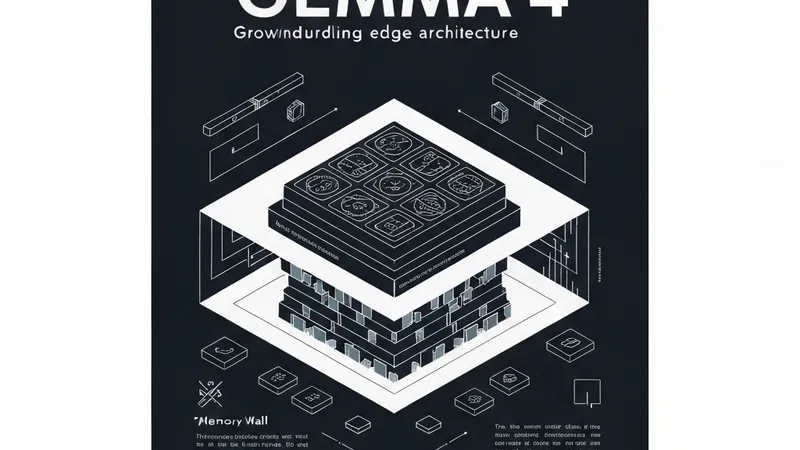

As a systems engineer focused on high-performance data ingestion, the most compelling aspect of Gemma 4 isn't merely its benchmarks—it's the ingenious way it physically manages memory. Most open models inevitably hit a "Memory Wall" when dealing with high context. For a standard Transformer, the Key-Value (KV) cache grows linearly, eventually consuming more VRAM than the model weights themselves. Gemma 4 ingeniously addresses this through a "Divergent Architecture" that distinctly separates its "Edge" models (E2B/E4B) from its "Server" models (31B Dense).

1. Per-Layer Embeddings (PLE)

The E2B variant exemplifies a sophisticated trade-off between memory and compute. It employs Per-Layer Embeddings (PLE), where a secondary embedding signal is fed into every decoder layer. By allocating nearly 46% of its parameter budget to these specialized lookup tables, Gemma 4 effectively prevents token identity collision within the narrow hidden states inherent to 2B-scale models. This design allows the model to maintain "representational depth" without demanding the massive DRAM footprint typically associated with 7B or 14B models.

2. The 128K Context Architecture

To achieve a 128K context window locally, Gemma 4 utilizes an innovative Alternating Attention mechanism:

- Local Sliding-Window Attention: Handles 512-token spans, optimized for high-speed local processing.

- Global Full-Context Attention: Interleaved at a 5:1 ratio with the local attention to ensure long-range reasoning capabilities are maintained.

This hybrid approach, coupled with an 8:1 Grouped-Query Attention (GQA) strategy, dramatically reduces the VRAM requirement. A 128K context window that would traditionally demand 24GB+ of VRAM can now run efficiently on consumer hardware with only approximately 3-4GB of overhead.

Hardware Observations: Local Linux Environment

I conducted tests on the Gemma 4 E2B (4-bit quantized) in a local Linux development environment (Ubuntu) using an Acer laptop. Key performance metrics observed include:

- Model Load Time: Approximately 1.8 seconds (using Ollama/GGUF).

- Peak VRAM (32K Context): 2.6 GB.

- Tokens Per Second: Approximately 42 tokens/sec (during decoding).

For systems like forge-core, where optimizing mmap-based data ingestion is critical, this low-latency local inference capability allows for real-time schema reasoning without the typical round-trip delays associated with API calls.

Conclusion

Gemma 4 unequivocally demonstrates that the future of local AI isn't simply about scaling up models. Instead, it lies in engineering specialized architectures that meticulously exploit the exact physics and capabilities of the underlying hardware. The "Divergent" approach is precisely what the open-source community needs to liberate itself from the dependency on massive, centralized server clusters.