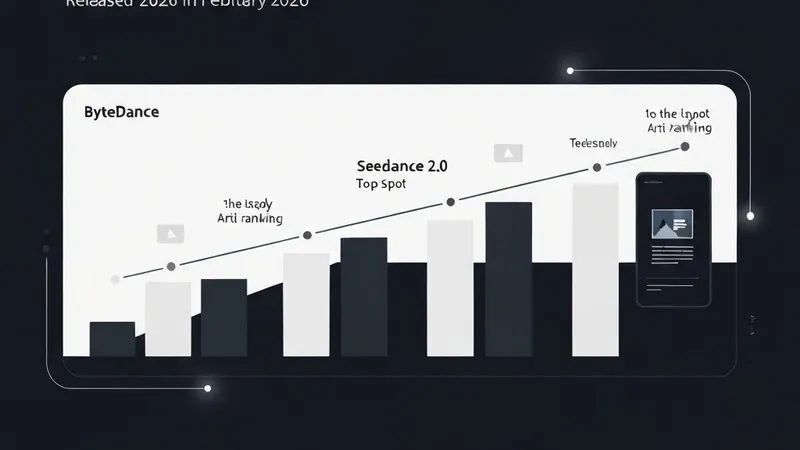

In February 2026, ByteDance launched its AI video model Seedance 2.0. Within weeks of release, it reached the #1 position on the Artificial Analysis text-to-video leaderboard, ranking above prominent models such as Google Veo 3, OpenAI Sora 2, and Runway Gen-4.5 in blind human evaluations.

Seedance 2.0 has drawn significant international attention, but users outside China often struggle to access ByteDance products such as Dreamina or VolcEngine, mainly because registration requires a Chinese phone number. This deep dive examines the technical architecture of Seedance 2.0, with particular attention to joint audio-video generation, which we consider its major breakthrough, and gives an objective assessment of the model's capabilities and limitations.

Key findings from our analysis of Seedance 2.0 include:

- Joint Audio-Video Generation: Seedance 2.0 generates audio and video simultaneously. In our analysis, this produces the most natural lip synchronization among current models and significantly improves realism.

- Multi-Reference Input: The model supports up to 12 input reference files, offering creators director-level control over the generated video's style, actions, and intricate details.

- Resolution Constraint: Seedance 2.0's maximum output resolution is currently 2K. This is a limitation compared with models such as Kling 3.0, which offers 4K resolution at 60 frames per second.

- Cost Efficiency: Generating a 15-second video clip costs approximately $0.14, which is 5-10 times cheaper than competing solutions and significantly lowers the barrier to high-quality AI video generation.

- CapCut Integration: Integration with CapCut gives Seedance 2.0 the largest distribution platform of any AI video model, which makes it broadly accessible and supports user adoption.
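
To put the cost figure from the list above in perspective, the arithmetic can be sketched in a few lines of Python. This is a rough illustration, not official pricing: the only inputs are the approximate $0.14 per 15-second clip and the 5-10x range quoted above, the function and constant names are hypothetical, and the per-minute figure assumes cost scales linearly with clip length, which the source does not confirm.

```python
# Approximate figures quoted in this article (not official pricing).
CLIP_SECONDS = 15
CLIP_COST_USD = 0.14
CHEAPER_FACTOR_RANGE = (5, 10)  # "5-10 times cheaper than competing solutions"


def cost_per_second(clip_cost: float, clip_seconds: int) -> float:
    """Average cost of one second of generated video."""
    return clip_cost / clip_seconds


def implied_competitor_cost(clip_cost: float, factors: tuple[int, int]) -> tuple[float, float]:
    """Price range for a comparable clip implied by the 'N times cheaper' claim."""
    low_factor, high_factor = factors
    return clip_cost * low_factor, clip_cost * high_factor


per_second = cost_per_second(CLIP_COST_USD, CLIP_SECONDS)
# Assumption: cost scales linearly with duration.
per_minute = per_second * 60
competitor_low, competitor_high = implied_competitor_cost(CLIP_COST_USD, CHEAPER_FACTOR_RANGE)

print(f"Seedance 2.0: ${per_second:.4f} per second, ${per_minute:.2f} per minute of footage")
print(f"Implied competitor cost for a 15-second clip: ${competitor_low:.2f} to ${competitor_high:.2f}")
```

Under these assumptions, a minute of footage comes to roughly $0.56, while the 5-10x claim implies that a comparable 15-second clip costs about $0.70 to $1.40 elsewhere.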

Taken together, these findings position Seedance 2.0 as a new benchmark in text-to-video generation, particularly for its advances in audio-video synchronization and cost-effectiveness.