Anthropic has once again slipped up on its internal release procedures, inadvertently publishing source code for its AI coding assistant, Claude Code. The incident follows an earlier lapse in which unannounced model details were exposed through publicly visible data caches. The leak offers a rare look inside one of Anthropic’s closed-source products at a critical time, as the company prepares for an initial public offering (IPO).

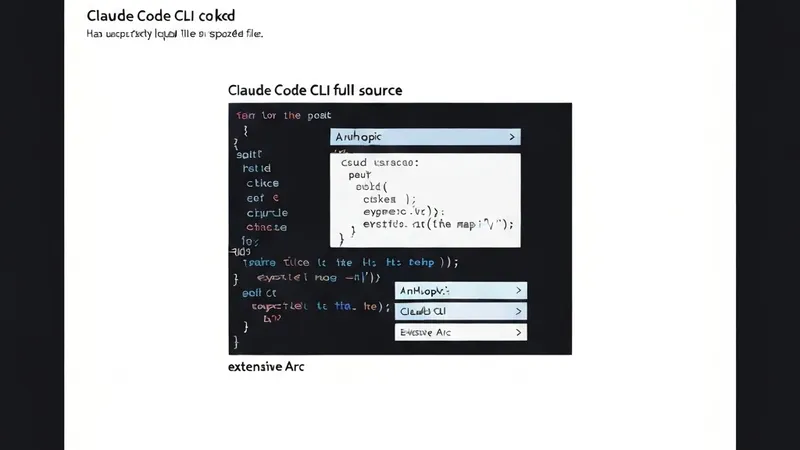

The code was discovered by Chaofan Shou, an intern at Solayer Lab, in a .map file included in a package published to the npm registry. In JavaScript tooling, a .map file is a source map: a plaintext JSON file generated when code is compiled, bundled, or minified, which maps locations in the shipped output back to the original source files and can embed the full text of those files. Source maps are intended for debugging, letting developers trace minified or obfuscated code back to readable source, but by publishing one, Anthropic exposed at least partial, unobfuscated TypeScript source code for Claude Code version 2.1.88. The file contained approximately 512,000 lines of code related to Anthropic’s coding agent.
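To illustrate why a published source map can expose readable code, the TypeScript sketch below reads a map file’s JSON and pulls out any original files embedded in it. It assumes the map follows the Source Map Revision 3 format, in which the `sources` array lists original file paths and the optional `sourcesContent` array holds their text at matching indices. The function name `extractEmbeddedSources` and the sample map are hypothetical and are not taken from the Claude Code package.

```typescript
// A minimal subset of the Source Map Revision 3 fields used here.
interface SourceMapV3 {
  version: number;
  file?: string;
  sources: string[];
  sourcesContent?: (string | null)[];
  mappings: string;
}

// Hypothetical helper: returns the original files embedded in a source map,
// keyed by their path in `sources`. Entries whose content is missing or null
// are skipped, since only their paths are recoverable.
function extractEmbeddedSources(mapJson: string): Map<string, string> {
  const map = JSON.parse(mapJson) as SourceMapV3;
  if (map.version !== 3) {
    throw new Error(`unsupported source map version: ${map.version}`);
  }
  const recovered = new Map<string, string>();
  const contents = map.sourcesContent ?? [];
  map.sources.forEach((path, i) => {
    const content = contents[i];
    if (typeof content === "string") {
      recovered.set(path, content);
    }
  });
  return recovered;
}

// Usage with a tiny, made-up source map (not data from the leak).
const example = JSON.stringify({
  version: 3,
  file: "cli.js",
  sources: ["../src/main.ts", "../src/util.ts"],
  sourcesContent: ["export const answer = 42;\n", null],
  mappings: "AAAA",
});

const files = extractEmbeddedSources(example);
files.forEach((content, path) => {
  console.log(`${path}: ${content.length} characters`);
});
```

A map that omits `sourcesContent` still reveals original file paths through `sources`, but recovering the code itself would then require access to those files.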

Developers combing through the leaked code have reported findings ranging from “spinner verbs,” the phrases Claude displays while processing tasks, to details on how swearing at Claude affects the way a prompt is handled. One person even claimed to have found a hidden “Tamagotchi”-style virtual pet, though it was reportedly set to launch on April 1, suggesting it may have been an April Fools’ Day joke. The file also reveals extensive information about Claude Code’s inner workings, including its engine for making API calls and its methods for counting the tokens used in processing prompts. Although the code does not appear to reveal details of Anthropic’s underlying models, the file’s full contents have been uploaded to a GitHub repository where anyone can inspect and analyze them.

Anthropic declined to comment on specific user discoveries but confirmed the authenticity of the leaked source code to Gizmodo. A company spokesperson stated, “Earlier today, a Claude Code release included some internal source code. No sensitive customer data or credentials were involved or exposed. This was a release packaging issue caused by human error, not a security breach. We’re rolling out measures to prevent this from happening again.” The incident also raises questions about how heavily Anthropic relies on AI tools internally: Boris Cherny, Anthropic’s head of Claude Code, noted in December that 100% of his contributions to Claude Code over a thirty-day period had been written by the coding agent itself.