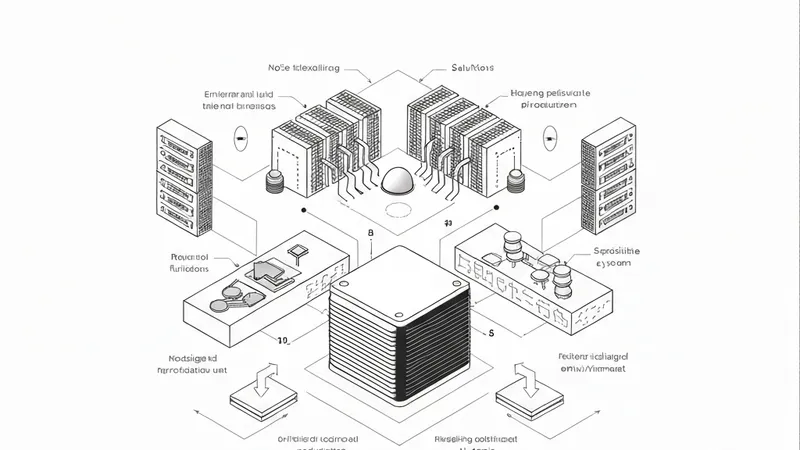

Previous arguments have posited that reliably deploying autonomous AI agents is not primarily a model problem, but an environment problem. The key lies in "harness engineering": the discipline of building structured workflows, validation loops, and governance mechanisms *around* the model rather than inside it. This principle suggests that organizations prioritizing the surrounding environment as their primary engineering target outperform those solely chasing better models, and it applies across all domains where agents handle complex, consequential work.

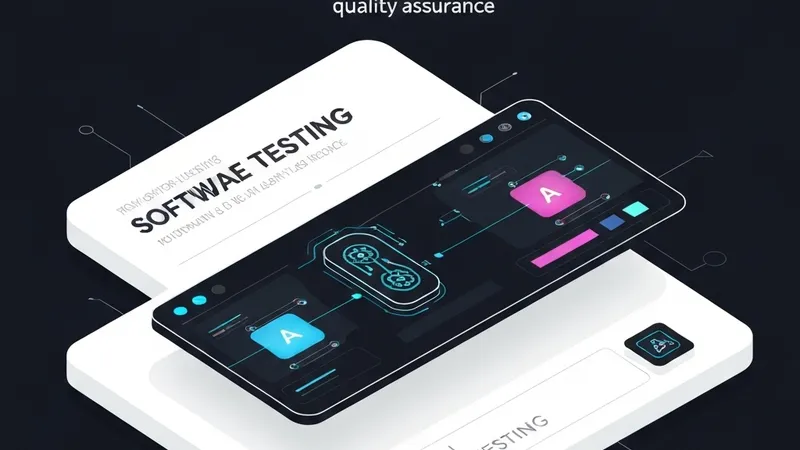

This argument immediately raises a practical question: how does one know whether an agent is ready for such work? Most existing evaluations still measure narrow, low-friction tasks in controlled or synthetic environments. They can indicate whether a model produces a plausible answer or completes a neat subtask, but they reveal little about an agent's ability to maintain coherence across a long workflow, adapt when issues arise, and finish a job whose result actually runs. Many benchmarks have become so easy for top models that scores are nearing perfect, offering no meaningful signal about which systems can handle real-world tasks.

Production environments typically demand sustained execution under friction: long chains of interdependent actions, genuine error recovery, and the application of deep domain knowledge to messy, open-ended objectives. This is a fundamentally different test from the one most benchmarks are designed to run. The pressure to bridge this gap is no longer theoretical. Terminal-based coding agents alone are already generating billions in revenue, which makes accurate measurement of these systems' capabilities and limitations under realistic conditions a commercial necessity for anyone developing, deploying, or investing in AI agent products.

Among production-ready autonomous agents, some of the most capable are currently concentrated in coding and software engineering. This makes sense: the terminal environment provides clear success criteria and immediate feedback. An agent cannot conceal its shortcomings behind fluent responses when a build fails, a dependency breaks, or a command returns incorrect output. It must continue working until the task is genuinely completed.

Terminal Bench was designed around this reality. It places agents within real terminal environments, pre-loaded with the files, packages, and system configurations the task requires. Each problem includes an instruction, a verification script, and a reference solution. The evaluation focuses not on whether the agent followed a preferred sequence of steps, but on whether it achieved a machine-checkable result. There is no partial credit for appearing competent; the output either works or it does not.
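To make the task structure concrete, here is a minimal Python sketch of outcome-based grading. It is not Terminal Bench's actual task format or API; the `Task` class, the `run_and_verify` function, and the toy file-writing task are all hypothetical names invented for illustration. The sketch only shows the shape of the idea: a task bundles an instruction, a verifier, and a reference solution, and the grade depends solely on the verifier's exit code.

```python
import subprocess
import sys
import tempfile
from dataclasses import dataclass


@dataclass
class Task:
    """Hypothetical task record; not Terminal Bench's real schema."""
    instruction: str           # natural-language goal shown to the agent
    verify_cmd: list[str]      # command whose exit code decides pass/fail
    reference_cmd: list[str]   # known-good solution, used to sanity-check the task


def run_and_verify(task: Task, solve_cmd: list[str]) -> bool:
    """Run solve_cmd in a fresh directory, then run the verifier there.

    Only the verifier's exit code matters: how the solution got there
    is never inspected, and there is no partial credit.
    """
    with tempfile.TemporaryDirectory() as workdir:
        subprocess.run(solve_cmd, cwd=workdir, check=False)
        result = subprocess.run(task.verify_cmd, cwd=workdir, check=False)
        return result.returncode == 0


# Toy task (an assumption for illustration): write "ok" into out.txt.
task = Task(
    instruction="Create a file named out.txt containing the word ok.",
    verify_cmd=[
        sys.executable, "-c",
        "import sys, pathlib; p = pathlib.Path('out.txt'); "
        "sys.exit(0 if p.exists() and p.read_text().strip() == 'ok' else 1)",
    ],
    reference_cmd=[
        sys.executable, "-c",
        "import pathlib; pathlib.Path('out.txt').write_text('ok')",
    ],
)

# A plausible-looking but wrong attempt: right file, wrong contents.
wrong_cmd = [
    sys.executable, "-c",
    "import pathlib; pathlib.Path('out.txt').write_text('almost ok')",
]

print(run_and_verify(task, task.reference_cmd))  # reference solution passes
print(run_and_verify(task, wrong_cmd))           # near miss still fails
```

Running the reference solution through the same verifier is a cheap way to confirm that a task is solvable and that its check is not broken. In a real harness, an agent's terminal session would stand in for `solve_cmd`, and the working directory would typically be an isolated environment rather than a temporary folder.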