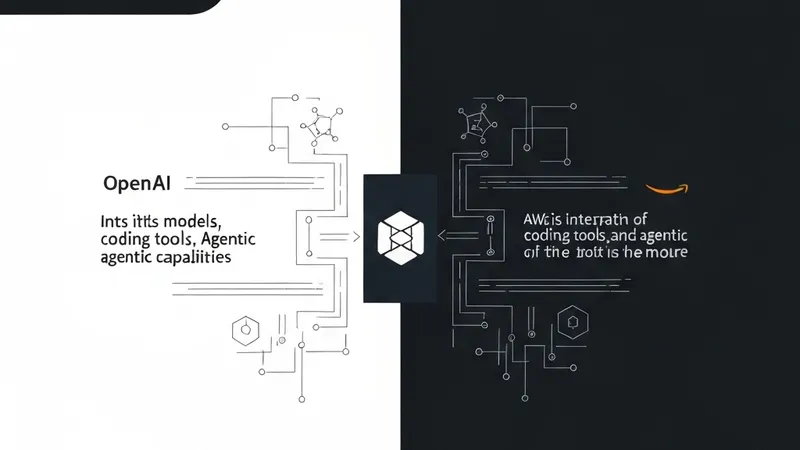

OpenAI has quickly moved to broaden its cloud strategy since announcing changes to its partnership with Microsoft. The creator of ChatGPT is now bringing its models, coding tools, and agentic capabilities to Amazon Web Services (AWS), launching a new set of integrations on Bedrock, AWS’s platform for building and deploying AI applications.

For AWS customers, this means direct access to OpenAI’s models and tools within their existing cloud environments, eliminating the need to rely on external APIs or Azure-based services. This integration also positions OpenAI’s technology alongside rival models already available on Bedrock, such as those from Anthropic, offering enterprises enhanced flexibility in building and deploying AI systems.

The seven-year journey of Microsoft and OpenAI has been marked by numerous breakthroughs and points of contention, with both companies ultimately seeking greater flexibility and autonomy. While the primary beneficiaries of this newfound maneuvering room are still being debated, AWS is strategically positioned to capitalize on this shift.

The collaboration dates back to 2019, when Microsoft invested $1 billion in the then-nascent lab and became its exclusive cloud provider. This deal granted Microsoft early access to OpenAI’s models and anchored OpenAI’s training and deployment stack on Azure. Subsequent investments deepened this relationship, including a major 2023 deal that reportedly brought Microsoft’s total investment to $13 billion and secured a significant minority stake, believed to be just under 50%, in OpenAI’s for-profit arm.

However, the partnership has also faced periods of instability. In November 2023, OpenAI’s board abruptly ousted CEO Sam Altman, prompting Microsoft to announce that it would hire him. Days later, Altman returned to OpenAI following internal backlash.

Though this incident exposed underlying fault lines, it did not alter the core incentives binding the two companies. For Microsoft, the partnership provided a crucial entry into the emerging large language model (LLM) market at a time when it lacked a comparable in-house offering. For OpenAI, it delivered the compute, capital, and distribution channels needed to train increasingly large systems and reach enterprise customers.

Yet this close coupling came with trade-offs. As OpenAI’s ambitions expanded, so did its infrastructure demands, placing increasing strain on Azure’s capacity and tying the company to a single cloud provider even as enterprises were increasingly adopting multi-cloud strategies. By mid-2025, reports indicated that OpenAI had already begun diversifying its compute resources, striking deals with Google Cloud, CoreWeave, and Oracle to supplement its needs, a clear sign of growing cracks in the exclusive provider setup.

Microsoft itself alluded to this pressure during its Fiscal Year 2026 Q2 earnings call in January, stating, “Our customer demand continues to exceed our supply.”