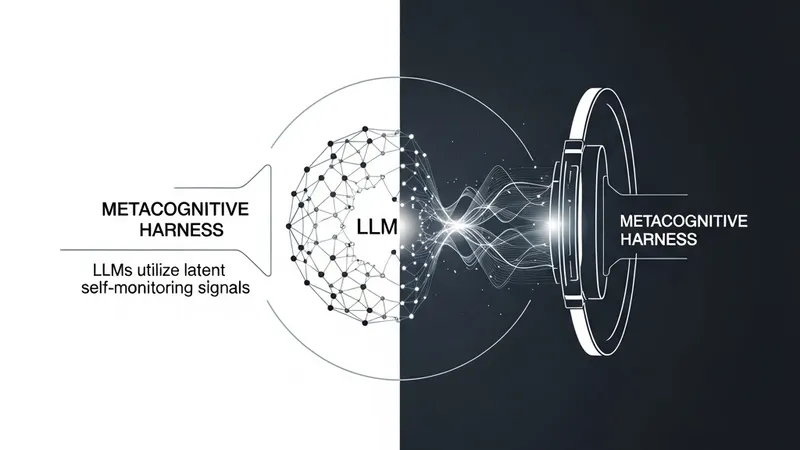

Large language models (LLMs) frequently exhibit useful self-monitoring signals: they can estimate their likelihood of success before solving a problem and judge the correctness of their answers afterward. However, these signals are typically measured or elicited in isolation rather than being used to control active inference. In this work, researchers explore whether LLMs possess latent metacognitive abilities that can be transformed into effective test-time control mechanisms.

Inspired by the Nelson-Narens theory from cognitive psychology, the study proposes a "metacognitive harness" that separates monitoring from reasoning. For each problem, the model first reports a pre-solve Feeling-of-knowing (FOK) signal. After each attempt, it reports a post-solve Judgment-of-learning (JOL) signal. Rather than treating these as passive confidence scores, the harness uses them as an explicit control interface for the reasoning process.

The harness operates by deciding when to trust the current solution, when to retry the task with compact metacognitive feedback, and when to pass multiple attempts to a final aggregator. This structured approach allows the model to act upon its internal state to optimize output quality during inference.
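The control flow described above can be sketched roughly as follows. This is a minimal illustration, not the study's implementation: the function `run_harness`, the `Attempt` record, the `trust_threshold` and `max_attempts` parameters, the feedback wording, and the rule that a high FOK shrinks the attempt budget are all assumptions made for the example. The four callables stand in for separate model calls.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class Attempt:
    answer: str
    jol: float  # post-solve confidence, assumed to lie in [0, 1]


def run_harness(
    problem: str,
    report_fok: Callable[[str], float],        # pre-solve FOK signal
    solve: Callable[[str, str], str],          # (problem, feedback) -> answer
    report_jol: Callable[[str, str], float],   # (problem, answer) -> JOL signal
    aggregate: Callable[[str, List[Attempt]], str],
    trust_threshold: float = 0.8,              # hypothetical cutoff
    max_attempts: int = 3,                     # hypothetical retry budget
) -> str:
    fok = report_fok(problem)
    # Assumption: a confident pre-solve estimate earns a single attempt;
    # otherwise the full retry budget is available.
    budget = 1 if fok >= trust_threshold else max_attempts

    attempts: List[Attempt] = []
    feedback = ""
    for _ in range(budget):
        answer = solve(problem, feedback)
        jol = report_jol(problem, answer)
        attempts.append(Attempt(answer, jol))
        if jol >= trust_threshold:
            return answer  # trust the current solution
        # Compact metacognitive feedback passed to the next attempt.
        feedback = (
            f"Previous attempt scored confidence {jol:.2f} "
            f"(pre-solve estimate {fok:.2f}); reconsider the approach."
        )

    if len(attempts) == 1:
        return attempts[0].answer
    # No attempt was trusted: hand all of them to the final aggregator.
    return aggregate(problem, attempts)
```

Keeping monitoring (`report_fok`, `report_jol`) separate from reasoning (`solve`) mirrors the separation the harness is built on, and makes each of the three decisions (trust, retry, aggregate) an explicit branch.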

Across text, code, and multimodal reasoning benchmarks, the harness substantially improved a fixed Claude Sonnet-4.6 base model without requiring any parameter updates or benchmark-specific fine-tuning. On the evaluated public benchmark snapshots, the system raised pooled accuracy from 48.3% to 56.9%, a gain of 8.6 percentage points, and exceeded the strongest listed leaderboard entries on three primary evaluation settings: HLE-Verified, LiveCodeBench v6, and R-Bench-V.

These results suggest that strong LLMs may already possess sophisticated latent metacognitive abilities but require an explicit control harness to act on them effectively during reasoning. This provides a promising framework for test-time scaling by focusing on the model's ability to monitor and regulate its own cognitive processes.