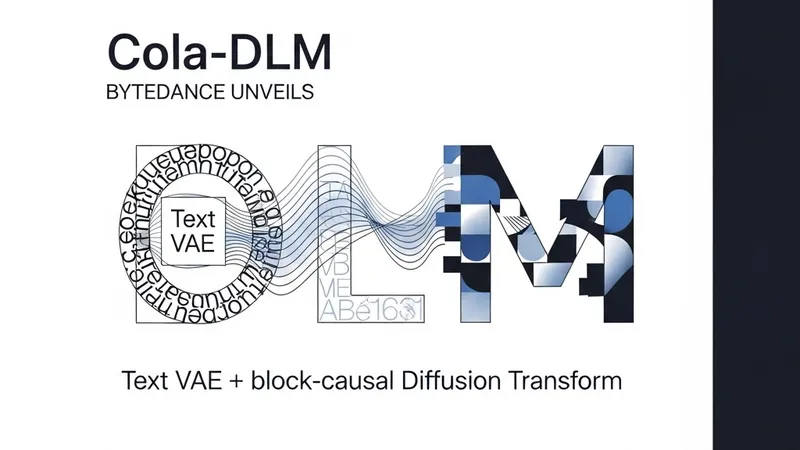

ByteDance has introduced Cola-DLM, a framework for Continuous Latent Diffusion Language Models. The architecture unifies a Text Variational Autoencoder (VAE) with a block-causal Diffusion Transformer (DiT). Together they let the model map discrete text into continuous latent sequences, offering an alternative to the traditional autoregressive paradigm.
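To make "block-causal" concrete, here is a minimal sketch of one common reading of the term: positions attend freely within their own block and to all earlier blocks, but never to later ones. The article does not spell out Cola-DLM's exact masking rule, so the function name `block_causal_mask`, the `block_size` parameter, and the rule itself are assumptions for illustration.

```python
def block_causal_mask(seq_len: int, block_size: int) -> list[list[bool]]:
    """Return mask[i][j] == True when position i may attend to position j.

    Assumed rule (not confirmed by the source): full attention inside a
    block, causal attention across blocks.
    """
    if seq_len < 0 or block_size <= 0:
        raise ValueError("seq_len must be >= 0 and block_size must be > 0")
    # Index of the block that each position belongs to.
    block_of = [i // block_size for i in range(seq_len)]
    return [
        [block_of[j] <= block_of[i] for j in range(seq_len)]
        for i in range(seq_len)
    ]


mask = block_causal_mask(seq_len=6, block_size=2)
for row in mask:
    print("".join("x" if allowed else "." for allowed in row))
# xx....
# xx....
# xxxx..
# xxxx..
# xxxxxx
# xxxxxx
```

With `block_size=1` this reduces to an ordinary causal mask, and with `block_size=seq_len` it becomes fully bidirectional, which is why the block size acts as a dial between autoregressive and diffusion-style generation.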

Cola-DLM uses Flow Matching for latent prior transport. According to the research, this helps the model navigate the complex probability distributions of the latent space, supporting high-fidelity text generation and improved scalability. The research also reports that operating in a continuous domain lets the framework capture more nuanced semantic relationships than standard discrete-token models.
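As a rough illustration of what a Flow Matching objective looks like, the sketch below uses one widely used variant: a straight-line path between a Gaussian noise sample and a data latent, with the model regressing the constant velocity along that path. The article does not say which path or source distribution Cola-DLM uses, so the linear path, the standard Gaussian source, and the names `flow_matching_pair`, `flow_matching_loss`, and `velocity_fn` are all assumptions. NumPy stands in for PyTorch to keep the example small.

```python
import numpy as np


def flow_matching_pair(z1, rng):
    """Sample (z_t, t, target) on the linear path z_t = (1 - t) * z0 + t * z1.

    z1 is a batch of data latents with shape (batch, ...). The source z0 is
    drawn from a standard Gaussian (an assumption of this sketch).
    """
    z0 = rng.standard_normal(z1.shape)
    # One time value per example, shaped to broadcast over the remaining axes.
    t = rng.uniform(size=(z1.shape[0],) + (1,) * (z1.ndim - 1))
    z_t = (1.0 - t) * z0 + t * z1
    target = z1 - z0  # velocity of the straight-line path, constant in t
    return z_t, t, target


def flow_matching_loss(velocity_fn, z1, rng):
    """Mean squared error between predicted and target velocities."""
    z_t, t, target = flow_matching_pair(z1, rng)
    pred = velocity_fn(z_t, t)
    return float(np.mean((pred - target) ** 2))


rng = np.random.default_rng(0)
latents = rng.standard_normal((4, 3, 8))  # toy batch: 4 sequences, 3 latents, dim 8


def zero_velocity(z_t, t):
    """Placeholder model that always predicts zero velocity."""
    return np.zeros_like(z_t)


print(flow_matching_loss(zero_velocity, latents, rng))
```

In a real system `velocity_fn` would be the trained network, and sampling would integrate the learned velocity from `t = 0` to `t = 1` starting from noise.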

Training proceeds in two stages: Text VAE pretraining to establish the latent space, followed by joint training with Flow Matching. ByteDance has released the entire framework under the Apache 2.0 license. Cola-DLM is built on PyTorch and the HuggingFace Transformers library and is designed for compatibility and ease of use, giving the research community direct access to diffusion-based text generation tools.