Ever found yourself three weeks into a project, realizing you chose the wrong Large Language Model (LLM)? Avoiding such a scenario is paramount in production environments. The Claude versus GPT debate isn't about which model is inherently superior; it's about which one best addresses your specific problems without escalating costs or hitting rate limits at critical junctures.

The Context Window Game Changer

Claude 3.5 Sonnet offers a 200K-token context window. OpenAI's GPT-4 Turbo offers up to 128K, and the base GPT-4 model only 8K. For real-world production tasks such as processing entire codebases, analyzing long documents, or maintaining extensive conversation history across complex workflows, this difference is far from academic.

If you are building a code review agent or a documentation system that must understand an entire codebase at once, Claude's larger context window is a genuine advantage. With GPT-4's smaller window (especially the 8K base model), you often have to chunk and summarize text, which adds latency and risks losing information, a serious problem in high-stakes applications.
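To see what the window difference means in practice, here is a rough sketch that estimates how many separate requests a document would need for each model. It assumes about 4 characters per token, a common rule of thumb rather than either vendor's tokenizer, and reserves 4,096 tokens for the reply to match the `max_tokens` value in the A/B config. The function names are hypothetical.

```python
import math

# Context window sizes in tokens (base GPT-4 "8K" is 8,192 tokens).
CONTEXT_WINDOWS = {
    "claude-3-5-sonnet": 200_000,
    "gpt-4-turbo": 128_000,
    "gpt-4": 8_192,
}

# Assumption: ~4 characters per token is a rough heuristic for English text
# and code; real counts depend on each vendor's tokenizer.
CHARS_PER_TOKEN = 4


def estimate_tokens(text: str) -> int:
    """Rough token estimate from character count (heuristic, not a tokenizer)."""
    return math.ceil(len(text) / CHARS_PER_TOKEN)


def chunks_needed(text: str, model: str, reserved_output: int = 4096) -> int:
    """Number of requests a document must be split into for a given model.

    reserved_output leaves room in the window for the model's reply.
    """
    budget = CONTEXT_WINDOWS[model] - reserved_output
    if budget <= 0:
        raise ValueError("reserved_output exceeds the context window")
    return max(1, math.ceil(estimate_tokens(text) / budget))
```

For a 600,000-character codebase (about 150,000 tokens by this heuristic), `chunks_needed` returns 1 for Claude 3.5 Sonnet, 2 for GPT-4 Turbo, and 37 for the 8K base GPT-4. For real budgets, count tokens with each vendor's own tokenizer.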

Where GPT Still Excels

Despite Claude's advancements, GPT-4's reasoning capabilities remain dominant for complex, multi-step problems. Its instruction following is also mature, so GPT-4 frequently needs fewer prompt engineering iterations to reach the desired result. For tasks demanding mathematical reasoning, logical puzzles, or intricate tool-use chains, GPT-4 maintains an edge.

Furthermore, the existing ecosystem plays a significant role. If your workflow is already integrated with OpenAI's infrastructure, including services like DALL-E or Whisper, switching models mid-project can introduce unnecessary friction and integration challenges.

Cost: More Nuanced Than It Appears

Claude 3.5 Sonnet costs approximately $3 per million input tokens and $15 per million output tokens. GPT-4 Turbo costs more on paper: $10 per million input tokens and $30 per million output tokens. However, GPT-4 may need fewer tokens for some tasks, for example if it reaches an acceptable answer with fewer retries or shorter outputs, and the two vendors' tokenizers count the same text differently. Run a detailed cost analysis on your actual workload before making a final decision.
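A per-request calculation makes the trade-off concrete. This minimal sketch uses the list prices quoted above (the same values as the `cost_per_1m_*` fields in the A/B config); the token counts in the example are illustrative, not measurements, so substitute numbers logged from your own traffic.

```python
# USD per 1M tokens, as quoted in this article; check current vendor pricing.
PRICES = {
    "claude-3-5-sonnet": {"input": 3.00, "output": 15.00},
    "gpt-4-turbo": {"input": 10.00, "output": 30.00},
}


def request_cost(model: str, input_tokens: int, output_tokens: int) -> float:
    """Dollar cost of a single request given its token counts."""
    p = PRICES[model]
    return (input_tokens * p["input"] + output_tokens * p["output"]) / 1_000_000


def monthly_cost(model: str, input_tokens: int, output_tokens: int,
                 requests_per_month: int) -> float:
    """Projected monthly spend for a steady per-request token profile."""
    return request_cost(model, input_tokens, output_tokens) * requests_per_month
```

At 20,000 input and 1,000 output tokens, a Claude request costs $0.075 and a GPT-4 Turbo request $0.23. At that input/output mix, GPT-4 Turbo would need to finish the same task with roughly a third of the tokens to break even.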

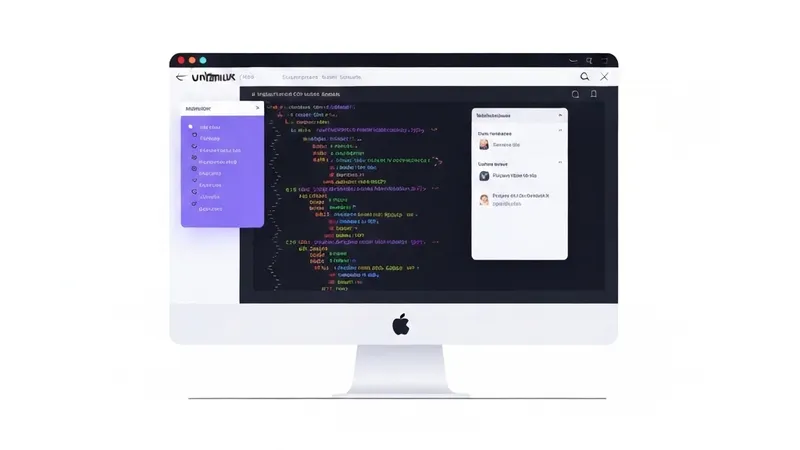

Here’s a practical configuration snippet for A/B testing both models within your monitoring setup:

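```yaml
models:
  claude:
    provider: anthropic
    model: claude-3-5-sonnet-20240620  # full dated model ID expected by the Anthropic API
    max_tokens: 4096
    temperature: 0.7                   # identical settings on both arms keep the comparison fair
    cost_per_1m_input: 3.00            # USD per 1M input tokens
    cost_per_1m_output: 15.00          # USD per 1M output tokens
  gpt4:
    provider: openai
    model: gpt-4-turbo
    max_tokens: 4096
    temperature: 0.7
    cost_per_1m_input: 10.00
    cost_per_1m_output: 30.00
```

Practical Decision Framework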

Choose Claude if:

- You require extensive context (e.g., RAG over large documents).

- Your tasks involve structured data extraction.

- You prioritize cost efficiency over deep reasoning.

- You prefer robust content moderation and safety defaults.

Choose GPT-4 if:

- You need advanced reasoning and Chain-of-Thought capabilities.

- Your prompt engineering is already optimized for OpenAI's style.

- Integration with other OpenAI services is essential.
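The checklist above can be turned into a quick, admittedly crude, screening function. This is a hypothetical sketch: the `Requirements` fields and the unweighted tally are illustrative assumptions, not a validated scoring method. It treats context size as a hard constraint only when a prompt exceeds GPT-4 Turbo's 128K window, and it falls back to an A/B test when the tally is tied.

```python
from dataclasses import dataclass


@dataclass
class Requirements:
    # Hypothetical fields mirroring the checklist items above.
    context_tokens: int = 0
    structured_extraction: bool = False
    cost_over_reasoning: bool = False
    wants_safety_defaults: bool = False
    deep_reasoning: bool = False
    prompts_tuned_for_openai: bool = False
    needs_openai_services: bool = False


GPT4_TURBO_CONTEXT = 128_000  # tokens


def suggest_model(req: Requirements) -> str:
    """Return 'claude', 'gpt-4', or 'a/b test' from an unweighted tally."""
    # Hard constraint: prompts larger than GPT-4 Turbo's window point to
    # Claude unless you are willing to chunk.
    if req.context_tokens > GPT4_TURBO_CONTEXT:
        return "claude"
    # Booleans sum as 0/1, so each checked item counts as one vote.
    claude_votes = sum([
        req.structured_extraction,
        req.cost_over_reasoning,
        req.wants_safety_defaults,
    ])
    gpt_votes = sum([
        req.deep_reasoning,
        req.prompts_tuned_for_openai,
        req.needs_openai_services,
    ])
    if claude_votes > gpt_votes:
        return "claude"
    if gpt_votes > claude_votes:
        return "gpt-4"
    return "a/b test"
```

For example, a project needing deep reasoning and Whisper integration scores `"gpt-4"`, while one with no strong signal either way returns `"a/b test"`, which is where a config like the one above earns its keep.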