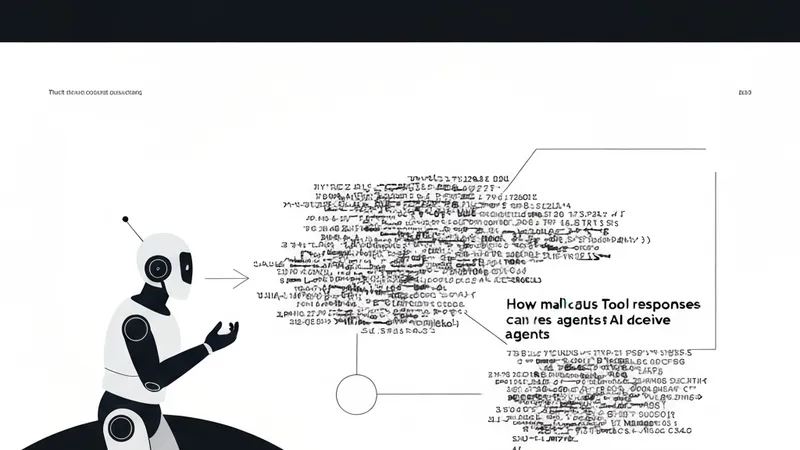

AI agent security has a critical runtime blind spot that most current security scanners fail to detect. It leaves agents open to "tool poisoning" attacks, in which a malicious tool response manipulates the agent's behavior.

Recently, OWASP (Open Worldwide Application Security Project) officially classified MCP Tool Poisoning as a distinct attack class, a sign of growing recognition that this is a unique challenge in AI agent security. Microsoft Defender's team has also published findings on this gap in their report "Plug, Play, and Prey." Traditional agent security tools focus primarily on scanning prompts, repositories, and tool definitions. What they commonly miss is the tool response itself: text that comes back from a tool and acts like an instruction rather than mere data, prompting the agent to perform unintended actions.

The Runtime Trust Gap Explained:

Two weeks ago, I discussed how MCP (Model Context Protocol) has become the "USB port" for AI tools. The plug standard works; the problem now is what flows through the cable. Tool registries like Smithery list over 7,000 public MCP servers, each capable of feeding free text to an AI model. Crucially, this free text sits in the same context window as the agent's filesystem, inbox, and write actions.

This is the essence of the runtime trust gap. OWASP's write-up directly states: "Tool responses go straight into the LLM context with no equivalent check." This sentence is arguably the most critical in current agent security discussions, pinpointing the core vulnerability and the urgent need for a solution.

Identifying the Blind Spot:

Most existing agent security tools were designed around an outdated problem model: scan the prompt, scan the connector catalog, scan the dependency graph, and consider the system secure. This model mistakenly assumes the threat originates solely from the input. It does not.

What scanners typically check today:

- Prompt content and templates

- Tool definitions and permissions

- Known package CVEs

- Server name and reputation

What they critically miss at runtime:

- Tool output flowing back into the LLM context

- The response path after the connection is established

- Free text masquerading as structured data

- The model treating that data as a "plan" or instruction

If an external tool returns plain text into the same context window as your privileged tools, the LLM does not categorize it as mere "data"; it interprets it as "context." A seemingly benign response can quietly instruct the agent to read a private file, push a branch, or even paste a sensitive token. The agent has no native concept of trust boundaries between tools, which leaves it vulnerable to this kind of manipulation.
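One way to picture a trust boundary is a thin layer that labels every tool response with its provenance before it reaches the context window. The sketch below is illustrative only: `ToolResult`, `wrap_for_context`, and the regex patterns are hypothetical names and heuristics invented for this example, not part of MCP or any scanner, and pattern matching alone would not stop a determined attacker.

```python
from __future__ import annotations

import re
from dataclasses import dataclass

# Hypothetical, deliberately tiny heuristics. Real attacks will not
# always match patterns this simple.
SUSPICIOUS_PATTERNS = [
    re.compile(r"ignore (all |any )?(previous|prior) instructions", re.IGNORECASE),
    re.compile(r"\b(read|open|cat)\b.*(\.ssh|\.env|id_rsa)", re.IGNORECASE),
    re.compile(r"\b(send|post|push|paste)\b.*\b(token|secret|password|api key)\b", re.IGNORECASE),
]


@dataclass(frozen=True)
class ToolResult:
    tool_name: str   # which tool produced the text
    trusted: bool    # True only for tools you operate and control
    text: str        # raw free text returned by the tool


def flag_instruction_like(text: str) -> list[str]:
    """Return the patterns that match, i.e. text that reads like an instruction."""
    return [p.pattern for p in SUSPICIOUS_PATTERNS if p.search(text)]


def wrap_for_context(result: ToolResult) -> str:
    """Label untrusted output as data, or withhold it if it looks like an instruction."""
    if result.trusted:
        return result.text
    if flag_instruction_like(result.text):
        return f"[untrusted output from {result.tool_name} withheld: instruction-like content]"
    return f"<untrusted_data source={result.tool_name!r}>\n{result.text}\n</untrusted_data>"
```

The point of the design is less the regexes than the `trusted` flag: once provenance travels with the text, downstream policy can treat a response from a public server differently from one produced by a privileged internal tool.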

Invariant Labs first demonstrated this vulnerability at production scale. Their tool poisoning notification showed how malicious MCP servers could hide instructions within tool descriptions, invisible to the user but fully actionable by the model. Their subsequent GitHub MCP exploit went further: a single crafted GitHub Issue hijacked an agent and exfiltrated private repository contents to a public pull request. The attack needed neither a classic prompt injection through the user's prompt nor a malicious package; it relied solely on a tool response that did its "job" too effectively.

Mitigation Strategies:

The security implications are clear. Teams that isolate privileged tools from untrusted external tools will be more resilient, and requiring human approval for destructive actions is a vital additional defense.
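Both measures can be sketched as a small policy gate that sits between the model and its tools. This is a minimal illustration under stated assumptions: the tool names, the `Session` class, and the `authorize` function are hypothetical, and the `approve` callback stands in for whatever human review step a team actually uses.

```python
from dataclasses import dataclass
from typing import Callable

# Hypothetical tool names, chosen only for illustration.
PRIVILEGED_TOOLS = {"read_private_file", "push_branch", "send_email"}
DESTRUCTIVE_TOOLS = {"push_branch", "send_email", "delete_file"}


@dataclass
class Session:
    # Set once any untrusted tool output has entered the context window.
    saw_untrusted_output: bool = False


def record_tool_output(session: Session, tool_name: str, trusted: bool) -> None:
    """Mark the session as tainted when an untrusted tool has responded."""
    if not trusted:
        session.saw_untrusted_output = True


def authorize(session: Session, tool_name: str, approve: Callable[[str], bool]) -> bool:
    """Decide whether the agent may call tool_name in this session."""
    # Isolation: privileged tools are unavailable once untrusted text is in context.
    if tool_name in PRIVILEGED_TOOLS and session.saw_untrusted_output:
        return False
    # Human approval: destructive actions always need an explicit yes.
    if tool_name in DESTRUCTIVE_TOOLS:
        return approve(tool_name)
    return True


session = Session()
record_tool_output(session, "public_issue_tracker", trusted=False)
# Even with an approving human, the privileged read is blocked after untrusted input.
blocked = authorize(session, "read_private_file", approve=lambda name: True)
```

In this sketch the isolation check runs before the approval check on purpose, so a tainted session cannot reach a privileged tool even if a reviewer clicks through the prompt.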