OpenAI has launched GPT-Image-2, its highly anticipated image generation model, now available through the API and ChatGPT. The model comes in both "thinking" and non-thinking variants and is positioned to leapfrog existing image models such as Nano Banana 2.

The release is noteworthy because it follows rumors of a "focus" sprint that reportedly involved the shutdown and departure of the Sora team. Against that backdrop, OpenAI's continued prioritization of image generation is both encouraging and somewhat surprising. The model is especially strong at rendering intricate text details and maintaining consistency.

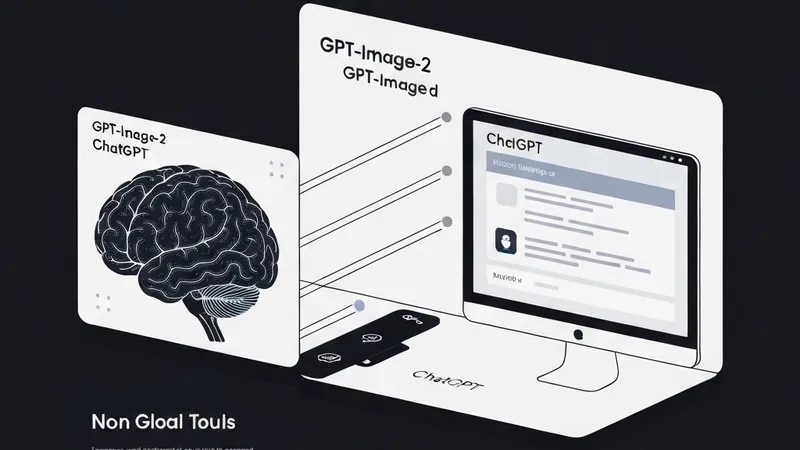

GPT-Image-2 represents a core product launch for OpenAI, rolled out as ChatGPT Images 2.0 and the underlying gpt-image-2 model across ChatGPT, Codex, and the API. Key features emphasize stronger text rendering, improved layout fidelity, advanced editing capabilities, multilingual support, and "thinking" for images. OpenAI states that when paired with a thinking model, GPT-Image-2 can search the web, generate multiple candidates, self-check outputs, and produce complex artifacts such as slides, infographics, diagrams, UI mockups, and QR codes.

Downstream tools, including Figma, Canva, Firefly, fal, and Hermes Agent, are already integrating the new model.

Benchmarks indicate a substantial jump, particularly on practical image tasks. Arena reports GPT-Image-2 as #1 across all Image Arena leaderboards, scoring 1512 on text-to-image, 1513 on single-image edit, and 1464 on multi-image edit. On text-to-image, it leads the next-ranked model by 242 Elo points. Independent reactions stress that the gain is less about generating prettier art than about a more usable model for UI design, mockups, documentation, productivity visuals, and reference-driven design loops.
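
To put the 242-point gap in perspective, the sketch below converts it into an expected head-to-head preference rate. It assumes the standard Elo expected-score formula with a 400-point scale; Arena's exact rating method may differ, so treat the result as a rough illustration rather than a reported figure. The function and variable names are illustrative.

```python
def elo_expected_score(rating_gap: float) -> float:
    """Expected score for the higher-rated side under the standard Elo formula.

    Assumption: a 400-point logistic scale, as in classic Elo. Arena's
    leaderboard may use a different fitting procedure.
    """
    return 1.0 / (1.0 + 10.0 ** (-rating_gap / 400.0))


top_score = 1512   # text-to-image score quoted above
lead = 242         # quoted lead over the next-ranked model

runner_up_score = top_score - lead      # implied score of the next model
win_prob = elo_expected_score(lead)     # expected preference rate for the leader

print(runner_up_score)       # 1270
print(f"{win_prob:.3f}")     # 0.801
```

Under that assumption, a 242-point lead implies the leading model would be preferred in roughly four out of five pairwise comparisons against the runner-up.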

A significant systems implication is that image generation is evolving into a front-end for coding agents. Users could, for instance, generate a UI specification as an image, which then serves as a visual reference for Codex or other code agents to implement.
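
A minimal sketch of the hand-off step in that loop: packaging a generated mockup image with implementation instructions as a single multimodal message. The content-part shape mirrors the image-input format used by OpenAI's Chat Completions API; whether a given coding agent accepts exactly this shape is an assumption, and `build_reference_message` and the placeholder bytes are hypothetical names for illustration only.

```python
import base64


def build_reference_message(png_bytes: bytes, instructions: str) -> dict:
    """Bundle a UI mockup image and text instructions into one user message.

    Hypothetical helper: the image is embedded as a base64 data URL so the
    message is self-contained and needs no hosted file.
    """
    encoded = base64.b64encode(png_bytes).decode("ascii")
    return {
        "role": "user",
        "content": [
            {"type": "text", "text": instructions},
            {
                "type": "image_url",
                "image_url": {"url": f"data:image/png;base64,{encoded}"},
            },
        ],
    }


# Placeholder bytes stand in for a real mockup produced by the image model.
mockup_png = b"\x89PNG\r\n\x1a\n" + b"placeholder"

message = build_reference_message(
    mockup_png,
    "Implement this screen as a responsive web page. Match the layout and copy.",
)
```

The resulting `message` could then be sent to whichever code agent is in use; the generated image acts as the specification, and the text part carries any constraints the image cannot express.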