OpenAI has explained a peculiar issue in which its AI models began frequently referencing mythical creatures such as goblins and gremlins. A report revealed that OpenAI's coding model had been instructed to "never talk about goblins, gremlins, raccoons, trolls, ogres, pigeons, or other animals or creatures." The AI startup then published an explanation describing the references as a "strange habit" the models developed during training.

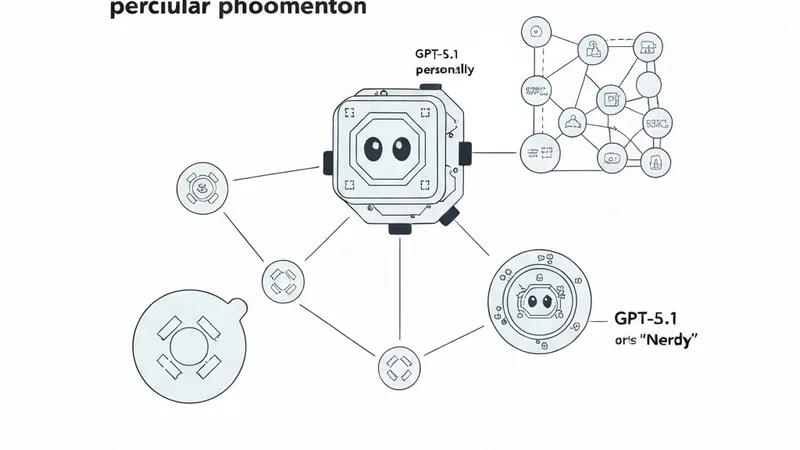

According to a blog post, OpenAI first noticed metaphors referencing goblins and similar creatures after releasing its GPT-5.1 model, specifically when the "Nerdy" personality option was enabled. The company observed that the problem grew worse with each subsequent model release. It eventually determined that its reinforcement learning process had inadvertently rewarded these quirky metaphors under the "Nerdy" condition, and that newer models were then trained on the reinforced behavior.

OpenAI clarified that while these rewards were applied only in the "Nerdy" condition, reinforcement learning does not guarantee that learned behaviors remain neatly scoped to the condition that produced them. Once a stylistic tic is rewarded, later training can spread or reinforce it elsewhere, especially if those outputs are reused in supervised fine-tuning or preference data.

References to goblins and gremlins dropped off after OpenAI discontinued the "Nerdy" personality in March, but they did not disappear completely: GPT-5.5, as used in the Codex coding tool, still produced them. This was because training for GPT-5.5 began before the root cause had been fully identified. As a result, the company had to give Codex explicit instructions not to mention these creatures. For users who would prefer their AI-generated code to keep such whimsical touches, OpenAI has also shared a way to reverse those instructions.