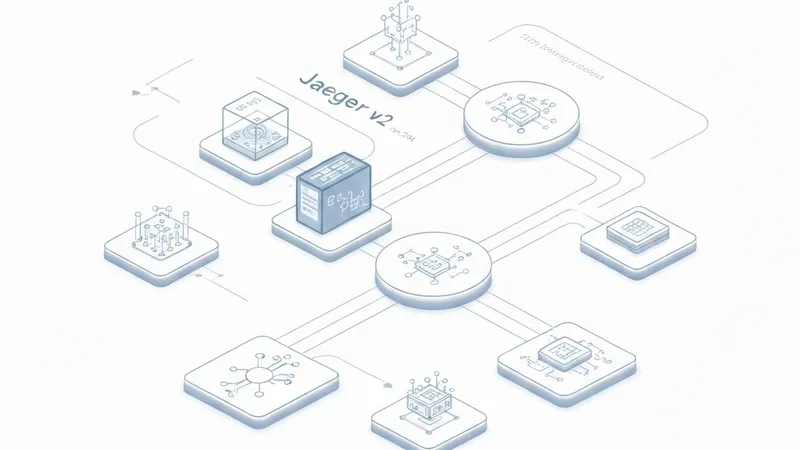

AI agents behave like distributed systems: a single run fans out into many asynchronous operations, including LLM calls, tool invocations, memory lookups, and multi-step reasoning loops. Observability tooling for these workloads has often been limited to logs and dashboards, with no trace that covers an entire agent run from start to finish. Jaeger v2's re-architecture addresses that gap.

Released in late 2024, Jaeger v2 was rebuilt internally on top of the OpenTelemetry Collector framework. The change brings several practical advantages:

- Native OTLP Ingestion: Eliminates the need for a translation layer from OTLP to Jaeger's internal format, ensuring telemetry data flows in as-is without loss from conversion.

- Single Binary, OTel-Native Configuration: The previous multi-component setup (jaeger-agent, jaeger-collector, jaeger-ingester, jaeger-query) is consolidated into a single binary, configured using the same YAML model as the OTel Collector.

- Full OpenTelemetry Collector Ecosystem Access: Because it is built on the Collector, Jaeger v2 can use components from the OTel Collector ecosystem, including tail-based sampling (now a first-class feature via the upstream OTel contrib processor), span-to-metric connectors, PII filtering processors, and Kafka pipelines.
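To make the tail-based sampling idea concrete, here is a minimal plain-Python sketch of the decision it makes. It is not the OTel contrib processor's implementation or configuration: the `Span` class, `keep_trace`, `tail_sample`, and the 2000 ms threshold are all hypothetical names and values chosen for illustration. The point is only that the keep-or-drop decision is made after the whole trace has arrived, so it can depend on errors or latency anywhere in the trace.

```python
from dataclasses import dataclass


@dataclass
class Span:
    # Hypothetical, simplified span record used only for this sketch.
    trace_id: str
    name: str
    duration_ms: float
    is_error: bool = False


def keep_trace(spans, latency_threshold_ms=2000.0):
    """Decide at the 'tail' (once the trace is complete) whether to keep it."""
    has_error = any(s.is_error for s in spans)
    slowest = max((s.duration_ms for s in spans), default=0.0)
    return has_error or slowest >= latency_threshold_ms


def tail_sample(spans, latency_threshold_ms=2000.0):
    """Group spans by trace ID, then keep only the traces worth storing."""
    by_trace = {}
    for span in spans:
        by_trace.setdefault(span.trace_id, []).append(span)
    return {
        trace_id: group
        for trace_id, group in by_trace.items()
        if keep_trace(group, latency_threshold_ms)
    }
```

For an agent workload this kind of policy would retain the runs that failed or stalled while dropping the bulk of fast, uneventful ones; head-based sampling cannot do that because it decides before the outcome is known.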

This architectural shift also matters for OpenTelemetry's new GenAI semantic conventions. Because Jaeger v2 ingests OTLP directly, spans that follow these conventions arrive with their attributes intact rather than passing through a format conversion. OpenTelemetry is actively developing the conventions to standardize how AI workloads are represented in traces. They define specific data structures for:

- Model Spans: Individual LLM inference calls, capturing details like token counts, model name, and latency.

- Agent Spans: Higher-level reasoning loops and orchestration steps within an agent.

- Events: Key occurrences such as prompt inputs, LLM completions, and tool call results.

- Metrics: Aggregate data on token usage, latency distributions, and error rates.
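As a rough illustration of what a model span carries, the sketch below builds a span name and attribute set for one LLM call using attribute keys from the GenAI conventions (`gen_ai.operation.name`, `gen_ai.request.model`, `gen_ai.usage.input_tokens`, `gen_ai.usage.output_tokens`). The conventions are still in development, so these keys may change. The helper names `model_span` and `total_tokens` and the model name `example-model` are hypothetical; in a real application an instrumentation library would set these attributes on an actual span.

```python
def model_span(operation, model, input_tokens, output_tokens):
    """Return (span_name, attributes) describing one LLM inference call.

    Attribute keys follow the in-development GenAI semantic conventions
    and may change; this helper itself is a hypothetical illustration.
    """
    attributes = {
        "gen_ai.operation.name": operation,
        "gen_ai.request.model": model,
        "gen_ai.usage.input_tokens": input_tokens,
        "gen_ai.usage.output_tokens": output_tokens,
    }
    # The conventions name model spans "{operation} {model}".
    return f"{operation} {model}", attributes


def total_tokens(spans):
    """Aggregate token usage across model spans, as a metric would."""
    return sum(
        attrs["gen_ai.usage.input_tokens"] + attrs["gen_ai.usage.output_tokens"]
        for _name, attrs in spans
    )


# Two LLM calls made during a single (hypothetical) agent run.
calls = [
    model_span("chat", "example-model", input_tokens=120, output_tokens=40),
    model_span("chat", "example-model", input_tokens=300, output_tokens=75),
]
print(calls[0][0])
print(total_tokens(calls))
```

Because the keys are standardized, a backend can filter or aggregate on them without knowing which provider or framework produced the span.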

These conventions are already being implemented for major providers such as OpenAI, Anthropic, AWS Bedrock, and Azure AI Inference. A draft convention for the Model Context Protocol (MCP) is also in progress, so that tool calls made via MCP-compatible servers can be traced as first-class spans. Although the conventions are still in "Development" status, instrumentation is already shipping, with libraries such as LangChain, LlamaIndex, and OpenAI's own SDKs beginning to emit OTel-compatible telemetry. Jaeger v2's native OTLP support lets it receive and process this data without an intermediate translation step.

For teams developing AI agents, this changes what tracing can cover. The traditional distributed tracing problem of finding a slow microservice hop now extends to following a complete agent workflow: from a user prompt through agent planning, multiple LLM calls, tool invocations, retries, and branching logic, to the final response. Without trace context propagation and standardized conventions, these steps show up as disconnected events and the run as a whole remains a black box. Jaeger v2 and the OTel GenAI conventions together provide the visibility needed to understand, debug, and optimize agent behavior.