For the past week, we've focused on building AI applications where every step was predefined. But what if the steps aren't known in advance? What if the AI needs to dynamically decide whether to search Google, consult a database, or use a calculator based on the user's query?

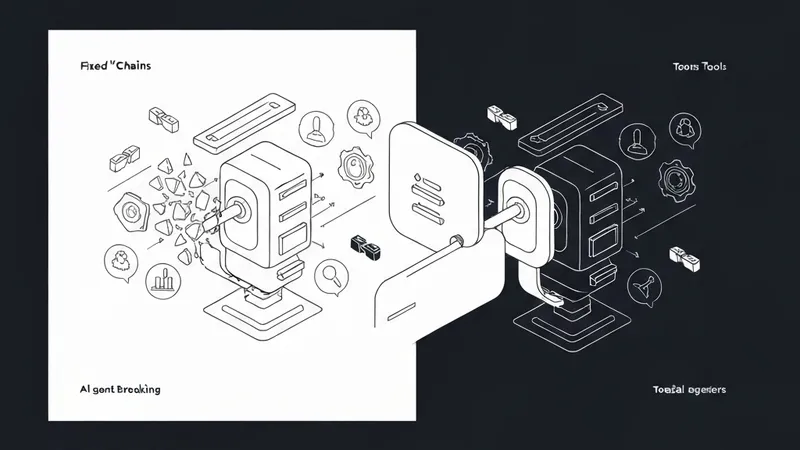

This scenario marks the transition from static 'Chains' to dynamic 'Agents.' While a Chain follows a fixed railroad track, an Agent operates more like a self-driving car: it has a destination (your goal), a set of tools, and the autonomy to determine the most effective route to get there.

What Exactly is an AI Agent?

An Agent is essentially a Large Language Model (LLM) that runs a 'Reasoning Loop' to accomplish a given task. This pattern is frequently referred to as the ReAct pattern (Reason + Act) in official documentation. Instead of generating a response in one shot, the Agent cycles through the following steps, repeating Thought, Action, and Observation as many times as needed before it answers:

- Thought: "The user is asking for the current Bitcoin price. I don't possess this information, so I should utilize a search tool."

- Action: Executes the Search tool.

- Observation: Receives the results returned by the search tool and uses them as input to the next Thought.

- Final Response: Synthesizes the observation into a comprehensive and helpful answer for the user.
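The loop above can be sketched in plain Python. This is only a minimal illustration, not LangChain's implementation: `scripted_llm`, `fake_search`, and `run_agent` are hypothetical names, the "LLM" is a hard-coded stand-in that returns canned decisions, and the search result is placeholder text rather than real data.

```python
from typing import Callable, Dict, List


def fake_search(query: str) -> str:
    """Hypothetical stand-in for a search tool; returns placeholder text."""
    return f"[placeholder search results for: {query}]"


# Registry mapping a tool name to the function that implements it.
TOOLS: Dict[str, Callable[[str], str]] = {"search": fake_search}


def scripted_llm(question: str, observations: List[str]) -> dict:
    """Hypothetical stand-in for an LLM call.

    With no observations yet it requests a tool; once it has one, it answers.
    """
    if not observations:
        return {
            "thought": "I don't have this information, so I should search.",
            "action": "search",
            "action_input": question,
        }
    return {
        "thought": "I now have what I need.",
        "final_answer": f"Based on the search: {observations[-1]}",
    }


def run_agent(question: str, max_steps: int = 5) -> str:
    """Repeat Thought -> Action -> Observation until a final answer appears."""
    observations: List[str] = []
    for _ in range(max_steps):
        step = scripted_llm(question, observations)       # Thought
        if "final_answer" in step:                        # Final Response
            return step["final_answer"]
        tool = TOOLS[step["action"]]                      # Action
        observations.append(tool(step["action_input"]))   # Observation
    return "Stopped: step limit reached without a final answer."


if __name__ == "__main__":
    print(run_agent("current Bitcoin price"))
```

The `max_steps` cap is a design choice worth keeping in any real agent: without it, a model that never produces a final answer would loop indefinitely.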

The Power of Tools

Tools serve as the 'hands' of your AI. Conceptually, a tool is a Python function paired with a name and a description. The LLM is not trained on the function itself; it reads the description in its prompt and decides when the tool is worth calling, and the surrounding framework performs the actual call. LangChain offers a rich collection of built-in tools, and developers can also create custom tools tailored to specific needs.

Commonly used tools include:

- Tavily/Google Search: Provides access to current information from the web.

- Wikipedia: Provides access to a vast repository of general knowledge.

- Python REPL: Enables the execution of complex mathematical computations or sophisticated data analysis tasks.

- Custom APIs: Facilitate seamless integration with proprietary company databases or internal systems.
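To make the idea of a custom tool concrete, here is a rough sketch of a tool as a function plus a name and description. It uses only the standard library; the `Tool` dataclass, `word_count`, and `render_tool_list` are hypothetical names chosen for illustration and are not LangChain classes.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class Tool:
    """Hypothetical container: what the LLM sees (name, description) plus the code to run."""
    name: str
    description: str
    func: Callable[[str], str]


def word_count(text: str) -> str:
    """Count whitespace-separated words in the input."""
    return str(len(text.split()))


def render_tool_list(tools: List[Tool]) -> str:
    """Format tools as 'name: description' lines, as they might appear in a prompt."""
    return "\n".join(f"{t.name}: {t.description}" for t in tools)


custom_tools = [Tool("word_count", "Counts the words in a piece of text.", word_count)]

# Lookup table so a tool chosen by name can be executed.
tool_by_name = {t.name: t for t in custom_tools}

if __name__ == "__main__":
    print(render_tool_list(custom_tools))
    print(tool_by_name["word_count"].func("agents pick tools by description"))
```

In LangChain itself, the `@tool` decorator from `langchain_core.tools` plays a similar role, taking the tool's description from the function's docstring.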

Building Your First Agent (The Modern Approach)

In LangChain, an 'Agent Executor' manages the reasoning and action loop: it calls the LLM, runs whichever tool the LLM selects, and feeds the result back until a final answer is produced. Here's a brief illustrative setup:

from langchain_openai import ChatOpenAI

from langchain_community.tools.tavily_search import TavilySearchResults

from langchain.agents import create_react_agent, AgentExecutor

from langchain import hub

# Assumes OPENAI_API_KEY and TAVILY_API_KEY are set in the environment.

# 1. Define the Tools available to the agent

tools = [TavilySearchResults(max_results=1)]

# 2. Retrieve a 'Prompt Template' from the LangChain Hub

# This prompt specifically instructs the AI on how to effectively utilize its tools.

prompt = hub.pull("hwchase17/react")

# 3. Initialize the Brain (The underlying LLM)

llm = ChatOpenAI(model="gpt-4o")

# 4. Construct the Agent by combining the LLM, tools, and prompt

agent = create_react_agent(llm, tools, prompt)

# 5. Create the Executor, which is the engine responsible for running the agent's loop

agent_executor = AgentExecutor(agent=agent, tools=tools, verbose=True)

# 6. Execute the agent with an input query

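result = agent_executor.invoke({"input": "What is the current stock price of NVIDIA?"})

# The executor returns a dict; the agent's final answer is under the "output" key.

print(result["output"])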
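The `hwchase17/react` prompt asks the model to reply in a labelled text format (`Thought:`, `Action:`, `Action Input:`, and eventually `Final Answer:`), and the executor has to read those labels to know what to do next. The following is a simplified sketch of that parsing step, not LangChain's actual parser; `parse_step` and its return shape are hypothetical.

```python
import re
from typing import Optional, Tuple

# Matches "Action: <tool>" followed on the next line by "Action Input: <input>".
ACTION_RE = re.compile(r"Action:\s*(.+?)\s*\nAction Input:\s*(.*)", re.DOTALL)


def parse_step(llm_text: str) -> Tuple[str, Optional[str], str]:
    """Return ("final", None, answer) or ("action", tool_name, tool_input)."""
    if "Final Answer:" in llm_text:
        answer = llm_text.split("Final Answer:", 1)[1].strip()
        return ("final", None, answer)
    match = ACTION_RE.search(llm_text)
    if match is None:
        # The model ignored the expected format; the caller must decide how to recover.
        raise ValueError("Could not parse LLM output")
    return ("action", match.group(1).strip(), match.group(2).strip())


if __name__ == "__main__":
    step_text = (
        "Thought: I should look this up.\n"
        "Action: search\n"
        "Action Input: NVIDIA stock price"
    )
    print(parse_step(step_text))
    print(parse_step("Thought: I now know the final answer\nFinal Answer: See results."))
```

Two failure modes follow from this design: the model may never emit a final answer, or it may produce text that cannot be parsed. `AgentExecutor` exposes the `max_iterations` and `handle_parsing_errors` arguments for these cases.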

Key Takeaways

Today's discussion covered:

- Agents: AI systems capable of reasoning and autonomously determining their operational path.

- Tools: The mechanism by which LLMs gain access to and interact with the external world.

- The ReAct Loop: The Thought, Action, Observation cycle an agent repeats until it can give a final response.

- Agent Executor: The runtime that drives the loop, calling the selected tools and passing their results back to the LLM.