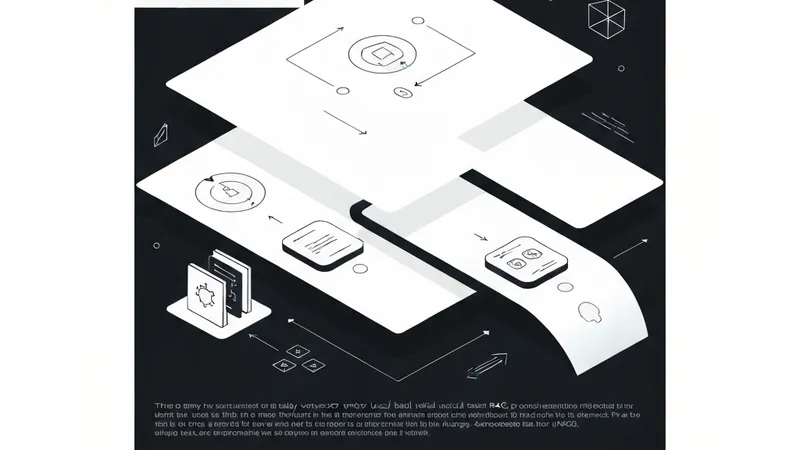

In the context of Retrieval-Augmented Generation (RAG) systems, precisely retrieving relevant information from vast document collections is a core challenge. Traditional RAG approaches often rely solely on vector databases for semantic similarity retrieval, while lexical search focuses on exact keyword matches. However, both methods have their limitations, leading to the development of hybrid search to combine their strengths.

Consider a collection of documents describing Python error codes, their definitions, and their use cases. Suppose a user asks, "What is ERR_404_AUTH?" Each approach handles the query differently:

- Classic RAG (Vector Search): Retrieves the chunks most semantically similar to the query, typically general authentication and error-related context. The results can be broad and may not mention the exact code.

- Lexical Search: Matches the literal query terms, such as ["What", "is", "ERR_404_AUTH"]. It finds the exact code but can miss relevant documents that use different phrasing.

- Hybrid Search: Combines a keyword match on "ERR_404_AUTH" with semantic similarity search, so exact hits and related context are both retrieved, resulting in more precise matches.
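The limitation of purely lexical matching can be seen in a few lines of plain Python. This is a minimal sketch, not how any particular search engine works: the mini-corpus, the stopword list, and the `keyword_hits` helper are all hypothetical and exist only for illustration.

```python
import re

# Hypothetical mini-corpus of error-code notes (illustrative only).
docs = [
    "ERR_404_AUTH is raised when the auth token points to a missing user.",
    "Authentication failures usually mean the credentials were rejected.",
    "ERR_500_DB signals that the database connection was lost.",
]

def tokens(text):
    """Lowercase the text and return its set of letter/digit/underscore runs."""
    return set(re.findall(r"[a-z0-9_]+", text.lower()))

def keyword_hits(query, corpus, stopwords=frozenset({"what", "is", "the", "a"})):
    """Return indices of documents sharing at least one non-stopword token with the query."""
    query_terms = tokens(query) - stopwords
    return [i for i, doc in enumerate(corpus) if query_terms & tokens(doc)]

# The exact code is found, because the literal token appears in document 0.
print(keyword_hits("What is ERR_404_AUTH?", docs))

# A paraphrase shares no tokens with any document, so nothing is returned.
print(keyword_hits("sign-in problem for unknown account", docs))
```

The second query is the kind of case where a vector retriever would be expected to help, which is the motivation for combining the two.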

Utilizing BM25 for Keyword Search

BM25 is a keyword ranking function that builds on TF-IDF, adding term-frequency saturation and document-length normalization. LangChain ships a built-in `BM25Retriever` (backed by the `rank_bm25` package), so setting it up takes only a few lines:

```python
# pip install rank_bm25 langchain-community
from langchain_community.retrievers import BM25Retriever
from langchain_core.documents import Document

# Chunks from your text splitter
chunks = [
    Document(page_content="The AX-705 engine uses a 4-stroke cycle."),
    Document(page_content="Maintenance for AX-705 requires synthetic oil."),
    Document(page_content="Four-stroke engines are common in modern cars."),
]

# Build the BM25 index over the chunks (conceptually, the inverted index)
bm25_retriever = BM25Retriever.from_documents(chunks)
bm25_retriever.k = 2  # Retrieve the top 2 chunks
```

Creating the Hybrid "Ensemble" Retriever

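To get both the precision of exact keyword matching and the broader reach of semantic similarity, combine your vector retriever with the BM25 retriever using LangChain's `EnsembleRetriever`. It fuses the two ranked result lists with weighted Reciprocal Rank Fusion, so the weights control how much each retriever's ranking counts toward the final order.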
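To make the role of the weights concrete, here is a minimal sketch of weighted Reciprocal Rank Fusion in plain Python. It assumes each retriever contributes `weight / (c + rank)` per document with `c = 60`, which matches how the LangChain documentation describes `EnsembleRetriever`, but treat it as an approximation rather than the library's code. The `weighted_rrf` helper and the document ids are hypothetical.

```python
from collections import defaultdict

def weighted_rrf(rankings, weights, c=60):
    """Fuse ranked lists of document ids with weighted Reciprocal Rank Fusion.

    Every list adds weight / (c + rank) to each document it contains,
    where rank starts at 1 for the top result.
    """
    scores = defaultdict(float)
    for ranking, weight in zip(rankings, weights):
        for rank, doc_id in enumerate(ranking, start=1):
            scores[doc_id] += weight / (c + rank)
    # Highest fused score first.
    return sorted(scores, key=scores.get, reverse=True)

# Hypothetical results from each retriever, best match first.
bm25_ranking = ["doc_a", "doc_b"]
vector_ranking = ["doc_c", "doc_a", "doc_b"]

fused = weighted_rrf([bm25_ranking, vector_ranking], weights=[0.3, 0.7])
print(fused)
```

In this toy case the documents returned by both retrievers end up ahead of `doc_c`, even though `doc_c` was the vector retriever's top hit, because agreement between the two lists accumulates score. In LangChain, the same idea is wired up as follows: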

```python
from langchain.retrievers import EnsembleRetriever

# Assumes `chroma_retriever` has already been created from your documents
hybrid_retriever = EnsembleRetriever(
    retrievers=[bm25_retriever, chroma_retriever],
    weights=[0.3, 0.7],  # 30% weight on keywords (BM25), 70% on meaning (vectors)
)
```

The 4 Steps of BM25 (Under the Hood)

When you call `hybrid_retriever.invoke("AX-705 engine")`, the BM25 component follows these steps:

- Tokenization: The query "AX-705 engine" is split into tokens. LangChain's default preprocessing splits on whitespace only, giving ["AX-705", "engine"]. To lowercase or strip punctuation, pass your own `preprocess_func` to `BM25Retriever.from_documents`.

- Lookup: Conceptually, the retriever consults an "Inverted Index" (a dictionary mapping each term to the chunks that contain it) to identify the chunks containing these exact strings.

- Scoring (f(q, d)): A score is calculated for each match using the BM25 formula, which considers:

    - Rareness: A term that appears in few chunks, such as "AX-705" in a typical corpus, contributes more points than a common term like "engine."

    - Saturation: Each additional occurrence of a term adds less than the previous one, so the score doesn't skyrocket even if the term appears many times. This prevents "keyword stuffing" from disproportionately influencing rankings.

    - Length Penalty: With the same term counts, a shorter chunk (e.g., 10 words) matching both query words ranks higher than a much longer one (e.g., 1000 words) that also matches both.

- Ranking: The chunks are sorted by their calculated scores, and the BM25 component returns the top `k` (2 in this setup).
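The four steps above can be sketched in plain Python. This is an illustrative, from-scratch version under stated assumptions, not the code that `rank_bm25` or LangChain runs: it uses whitespace tokenization, a common smoothed IDF variant that is never negative, and the conventional defaults `k1=1.5` and `b=0.75`. The function names are hypothetical.

```python
import math
from collections import Counter, defaultdict

def tokenize(text):
    # Step 1: whitespace split only (no lowercasing, no punctuation stripping).
    return text.split()

def build_index(corpus):
    """Return per-document term counts and an inverted index (term -> set of doc ids)."""
    term_counts = [Counter(tokenize(doc)) for doc in corpus]
    inverted = defaultdict(set)
    for doc_id, counts in enumerate(term_counts):
        for term in counts:
            inverted[term].add(doc_id)
    return term_counts, inverted

def bm25_rank(query, corpus, k1=1.5, b=0.75):
    """Return (doc_id, score) pairs for matching documents, best first."""
    term_counts, inverted = build_index(corpus)
    n_docs = len(corpus)
    lengths = [sum(counts.values()) for counts in term_counts]
    avg_len = sum(lengths) / n_docs
    scores = defaultdict(float)
    for term in tokenize(query):
        postings = inverted.get(term, set())  # Step 2: lookup in the inverted index
        if not postings:
            continue
        # Rareness: terms found in fewer documents get a larger IDF.
        idf = math.log(1 + (n_docs - len(postings) + 0.5) / (len(postings) + 0.5))
        for doc_id in postings:  # Step 3: scoring
            tf = term_counts[doc_id][term]
            # Length penalty: documents longer than average get a larger denominator.
            norm = 1 - b + b * lengths[doc_id] / avg_len
            # Saturation: the fraction approaches (k1 + 1) as tf grows, so it is bounded.
            scores[doc_id] += idf * tf * (k1 + 1) / (tf + k1 * norm)
    # Step 4: ranking
    return sorted(scores.items(), key=lambda pair: pair[1], reverse=True)

# The same three chunks used earlier, as plain strings.
corpus_texts = [
    "The AX-705 engine uses a 4-stroke cycle.",
    "Maintenance for AX-705 requires synthetic oil.",
    "Four-stroke engines are common in modern cars.",
]

for doc_id, score in bm25_rank("AX-705 engine", corpus_texts):
    print(doc_id, round(score, 3), corpus_texts[doc_id])
```

With this corpus, the first chunk ranks highest because it matches both query tokens, the second matches only "AX-705", and the third is never scored because "engines" is a different token from "engine" under whitespace tokenization. That last point is one reason a custom preprocessing step, or the semantic half of the hybrid retriever, matters.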