In this discussion, you will learn how to harden a semantic cache for Large Language Models (LLMs), a crucial LLMOps pattern for reducing redundant inference costs. We aim to transform a functional semantic caching prototype into a robust system capable of surviving real-world usage, focusing on TTL (Time-To-Live) validation, confidence scoring, query deduplication, and cache poisoning prevention.

A basic semantic cache can work end to end: it avoids redundant LLM calls, reuses responses for identical queries, and even handles paraphrased inputs via semantic similarity. That is only the starting point for real-world systems. A semantic cache that functions perfectly under ideal conditions can fail in subtle and dangerous ways when exposed to real users, long-running processes, and evolving information. These failures typically manifest not as crashes or explicit errors, but as silent correctness issues, degraded user trust, and unpredictable behavior over time.

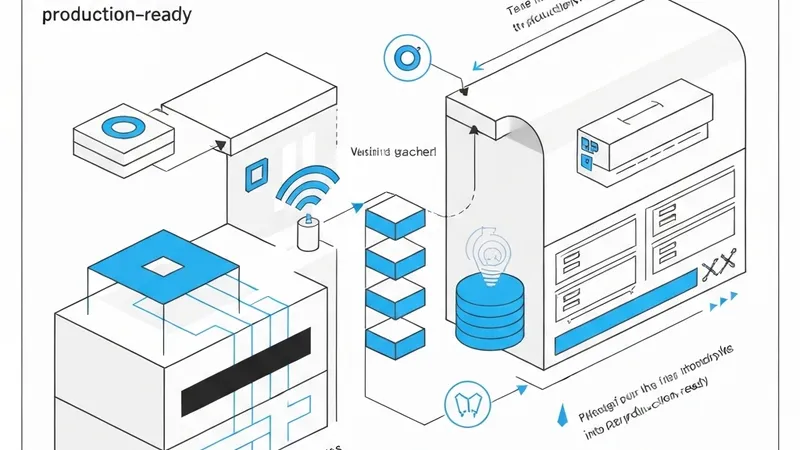

Initial implementations often focus on flow correctness: requests progress through exact match → semantic match → LLM fallback (generation), cached responses are reused appropriately, and the system is observable and debuggable without hidden abstractions. Long-term safety, however, is often overlooked. We have not yet addressed critical questions such as:

- How old is a cached response, and should we still trust it?

- What happens if the LLM returns an error or partial output?

- What if the cache gradually fills with duplicates?

- What if similarity is high, but the semantic context is critically different?

These questions mark the gap between a prototype and a reliable, production-ready semantic cache, one that maintains integrity and performance under diverse operational conditions.
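To make the flow and the first three questions concrete, here is a minimal sketch of the exact match → semantic match → LLM fallback path with a TTL check, a guard against caching empty output, and a normalized key that prevents exact duplicates. Everything named here (`SemanticCache`, `Entry`, `embed`, `llm`, `ttl_seconds`, `threshold`, `clock`) is hypothetical and chosen for illustration. The linear scan stands in for whatever vector index a real system would use, and the sketch does not attempt to solve the fourth question (high similarity with a critically different context).

```python
import math
import time
from dataclasses import dataclass
from typing import Callable


@dataclass
class Entry:
    query: str
    embedding: list[float]
    response: str
    created_at: float  # timestamp used for the TTL check


def cosine(a: list[float], b: list[float]) -> float:
    """Cosine similarity; returns 0.0 if either vector has zero length."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


class SemanticCache:
    """Illustrative cache: exact match -> semantic match -> LLM fallback."""

    def __init__(
        self,
        embed: Callable[[str], list[float]],  # assumed embedding function
        llm: Callable[[str], str],            # assumed LLM call
        ttl_seconds: float = 3600.0,
        threshold: float = 0.9,
        clock: Callable[[], float] = time.time,
    ):
        self.embed = embed
        self.llm = llm
        self.ttl_seconds = ttl_seconds
        self.threshold = threshold
        self.clock = clock
        # Keyed by normalized query, so the same query is stored only once.
        self.entries: dict[str, Entry] = {}

    def _is_fresh(self, entry: Entry) -> bool:
        return self.clock() - entry.created_at < self.ttl_seconds

    def get(self, query: str) -> tuple[str, str]:
        """Return (response, source), where source is 'exact', 'semantic', or 'llm'."""
        key = " ".join(query.lower().split())

        # 1. Exact match, trusted only while the entry is younger than the TTL.
        entry = self.entries.get(key)
        if entry is not None and self._is_fresh(entry):
            return entry.response, "exact"

        # 2. Semantic match over fresh entries (linear scan for simplicity).
        query_embedding = self.embed(query)
        best, best_score = None, 0.0
        for candidate in self.entries.values():
            if not self._is_fresh(candidate):
                continue
            score = cosine(query_embedding, candidate.embedding)
            if score > best_score:
                best, best_score = candidate, score
        if best is not None and best_score >= self.threshold:
            return best.response, "semantic"

        # 3. LLM fallback. If the call raises, nothing is cached;
        #    empty or whitespace-only output is returned but never stored.
        response = self.llm(query)
        if response and response.strip():
            self.entries[key] = Entry(key, query_embedding, response, self.clock())
        return response, "llm"
```

Injecting `clock` keeps expiry testable without sleeping. A stale entry is simply overwritten on the next miss for the same key, so this sketch needs no separate eviction pass, although a long-running system would likely want one.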