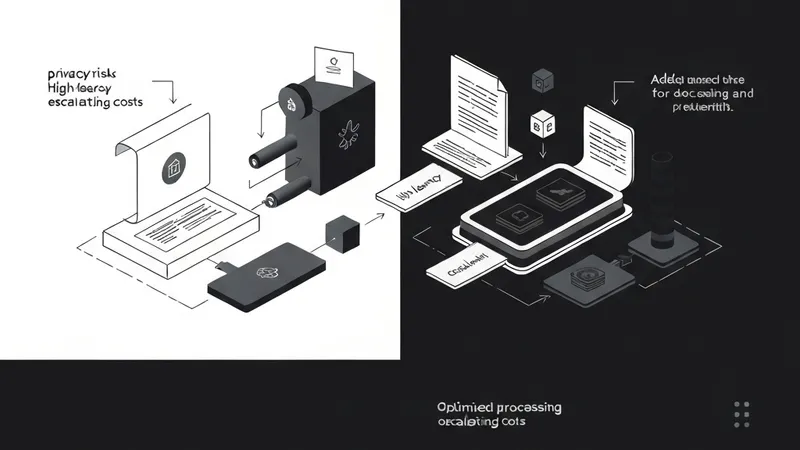

The "Round Trip" represents a hidden cost in modern application development. For years, the prevailing belief was that any intelligent operation—such as extracting data from a receipt, summarizing a medical report, or parsing an invoice—necessitated a journey to the cloud. This process involves bundling a file, uploading it to a server, awaiting processing by a large language model (LLM) like GPT-4 or Gemini Pro, and then downloading the result.

While powerful, this architecture comes with significant drawbacks: user documents leave the device, every request depends on a stable network connection, and API costs grow in step with usage.

However, the mobile development landscape is evolving. With the advent of Gemini Nano and AICore, Android developers can now deploy the core intelligence directly onto the device. This deep dive explores how to implement a production-grade Document Parsing Engine that operates entirely on-device, leveraging modern Kotlin features and the latest GenAI system services.

The Philosophy of On-Device Document Parsing

At its essence, a Document Parsing Engine is a pipeline designed to convert unstructured data—such as a PDF, a screenshot of a receipt, or a handwritten note—into structured, machine-readable formats like JSON or Kotlin Data Classes.
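To make the "unstructured in, structured out" idea concrete, here is a minimal sketch of the target end of such a pipeline. It assumes, purely for illustration, that the model has been prompted to answer with simple `key: value` lines. The `Receipt` class, its field names, and the `parseReceipt` helper are hypothetical and not part of any Android or AICore API.

```kotlin
// Hypothetical target structure for a parsed receipt; field names are illustrative.
data class Receipt(
    val merchant: String,
    val totalAmount: Double,
    val dueDate: String?
)

// Converts "key: value" lines (an assumed model output format) into a Receipt.
// Returns null when a required field is missing or malformed.
fun parseReceipt(modelOutput: String): Receipt? {
    val fields = modelOutput.lineSequence()
        .mapNotNull { line ->
            val idx = line.indexOf(':')
            if (idx <= 0) null
            else line.substring(0, idx).trim().lowercase() to line.substring(idx + 1).trim()
        }
        .toMap()

    val merchant = fields["merchant"]?.takeIf { it.isNotEmpty() } ?: return null
    val total = fields["total"]?.toDoubleOrNull() ?: return null
    val dueDate = fields["due_date"]?.takeIf { it.isNotEmpty() } // optional field
    return Receipt(merchant, total, dueDate)
}

fun main() {
    val raw = """
        merchant: Corner Cafe
        total: 12.50
        due_date: 2024-07-01
    """.trimIndent()
    println(parseReceipt(raw))
}
```

Returning `null` on a missing or malformed required field keeps the rest of the app from treating a half-parsed document as valid data. A real engine would likely use a stricter format such as JSON with a schema.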

Moving this intelligence to the edge is more than a technical feat; it's a strategic design choice underpinned by three fundamental pillars:

1. Data Sovereignty and Privacy

In an era of frequent data breaches, users are increasingly concerned about where their documents end up. Sensitive information such as medical records, financial statements, and personal IDs is not meant to float through third-party servers. With Gemini Nano, inference runs locally through AICore, which Google describes as following Android's Private Compute Core principles, so document contents do not need to leave the device. The intelligence is brought to the data, rather than the data traveling to the intelligence.

2. Zero Latency and Real-Time Feedback

Network hops are detrimental to a fluid User Experience (UX). Eliminating cloud dependency enables "live extraction." Imagine a user pointing their camera at a document and seeing fields like "Total Amount" or "Due Date" populate almost immediately as they move the device. Local inference still takes time, so the latency is not literally zero, but removing the network round trip from every frame is what makes this level of responsiveness practical, and it keeps working when the connection is slow or absent.

3. Scaling Without the Bill

Cloud-based LLMs typically charge per token. If an application scales to a million users parsing ten documents daily, operational expenses can skyrocket. On-device AI utilizes the user's local hardware (NPU, GPU, TPU). Once the model is deployed, the cost of each additional inference session for the developer is effectively zero.
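The scaling argument can be checked with back-of-the-envelope arithmetic. The sketch below uses the user and document counts from the scenario above. The tokens-per-document figure and the price per million tokens are hypothetical placeholders, not real pricing from any provider.

```kotlin
// Estimates the daily cloud bill for per-token pricing.
// tokensPerDoc and usdPerMillionTokens are assumed inputs, not published prices.
fun dailyCloudCostUsd(
    users: Long,
    docsPerUserPerDay: Long,
    tokensPerDoc: Long,
    usdPerMillionTokens: Double
): Double {
    val tokensPerDay = users * docsPerUserPerDay * tokensPerDoc
    return tokensPerDay / 1_000_000.0 * usdPerMillionTokens
}

fun main() {
    val daily = dailyCloudCostUsd(
        users = 1_000_000,          // from the scenario above
        docsPerUserPerDay = 10,     // from the scenario above
        tokensPerDoc = 2_000,       // hypothetical prompt + response size
        usdPerMillionTokens = 1.0   // hypothetical blended price
    )
    println("Estimated daily cloud cost (USD): $daily")
    println("Estimated yearly cloud cost (USD): ${daily * 365}")
}
```

Under these assumed numbers the bill comes to 20,000 USD per day. The exact figure matters less than its shape: the cost is a product of users, documents, and tokens, so it grows with every one of them. With on-device inference, that per-request term disappears from the developer's bill.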

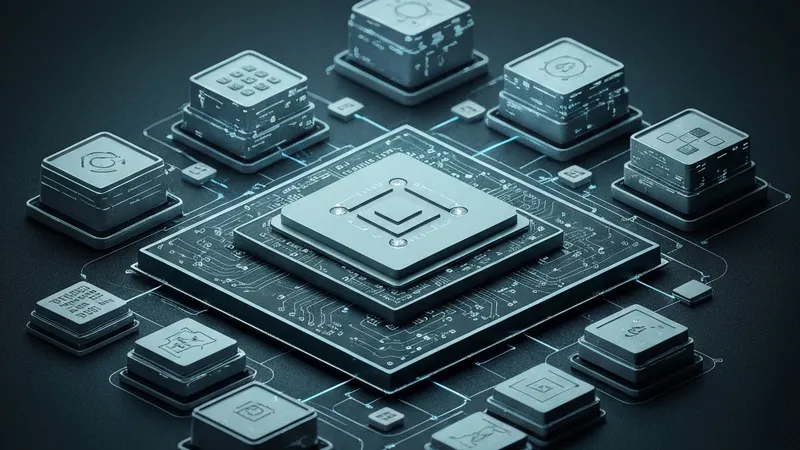

AICore: The System-Level AI Provider

To build this engine, understanding AICore is crucial. In the early days of mobile AI, developers had to bundle .tflite models directly within their APKs. This presented significant storage challenges; if five different apps used the same model...