DeepSeek has recently unveiled two large language models: the flagship DeepSeek-V4-Pro 1.6T, with 1.6 trillion parameters, and the more efficient DeepSeek-V4-Flash 284B, with 284 billion parameters.

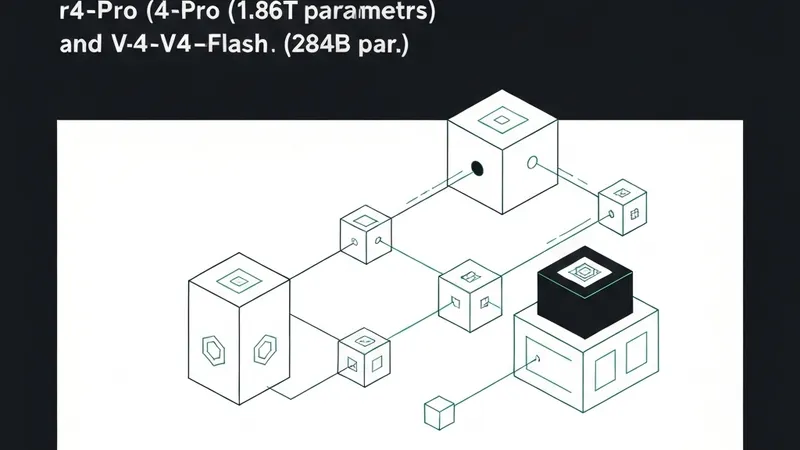

In response to these releases, FlagOS, an AI operating system led by the Beijing Academy of Artificial Intelligence (BAAI), moved quickly to adapt both models. FlagOS achieved day-zero adaptation and inference deployment of DeepSeek-V4-Flash on eight leading AI chips, spanning both domestic and international accelerators: Haiguang, Muxi, Huawei Ascend, Moore Threads (with FP8 support), Kunlunxin, T-Head Zhenwu, Tianshu, and NVIDIA (with FP8 support).

Meanwhile, the FlagOS team is migrating and adapting DeepSeek-V4-Pro across multiple chips. This adaptation work is slated to be open-sourced, which is expected to strengthen the integration of large models with diverse AI hardware ecosystems and support the development of independent, controllable AI computing infrastructure, particularly in China.