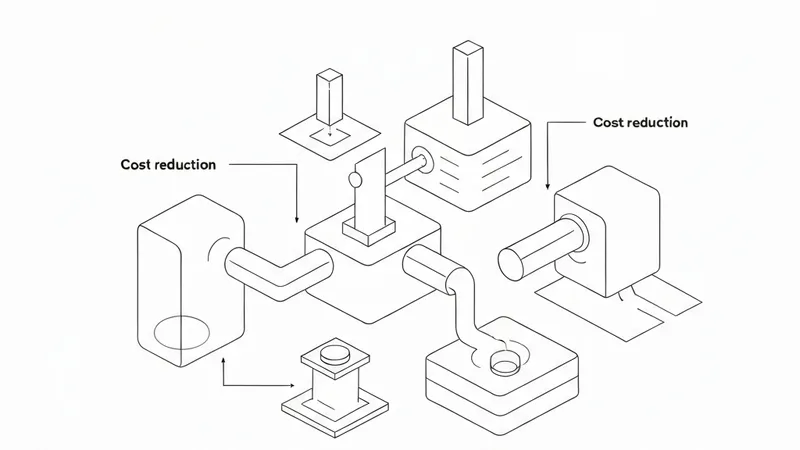

The DeepSeek V4 technical report has recently become a focal point of discussion in the AI industry. Departing from the conventional “brute force aesthetics” of scaling laws, which prioritize massive compute and parameter counts, V4 takes a “restrained aesthetics” approach to model training. The strategy combines a series of optimizations and architectural redesigns: new attention mechanisms (CSA+HCA), a Mixture-of-Experts (MoE) architecture, post-training refinements, and inference system engineering. Together, these cut the compute V4-Pro needs for 1-million-token long-context tasks to just 27% of that of its predecessor, V3.2, and compress the KV cache by 90%.

However, a model's true value cannot be judged from paper specifications and benchmarks alone. We therefore invited nearly 10 developers, application entrepreneurs, and investors to test V4 over three days. A counterintuitive conclusion emerged: DeepSeek V4's impact on the application layer may be greater than its impact on the model layer. Its engineering optimizations are impressive, but DeepSeek's own report acknowledges that its development trajectory lags frontier closed-source models by approximately 3 to 6 months. V4's gains in reasoning and Agent capabilities also come with a trade-off: some loss of accuracy.

For commercial applications that demand high stability and precision, V4 is not yet an out-of-the-box solution. Li Bojie, Chief Scientist at Pine AI, and Chillin, founder of a leading coding agent startup, both said that V4's tool-calling stability and hallucination rate need to be mitigated with a “harness” layer, meaning that robust scaffolding around the model is essential for practical deployment. The rapid iteration of foundation model performance also poses a challenge for application startups: future moats for AI applications will depend on integrating models, agents, product scenarios, and data feedback into reliable, cost-effective, and scalable production systems.
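As a rough illustration of what a “harness” layer for tool calling can look like, the sketch below validates a model's tool call against a declared schema and feeds the validation error back to the model for a retry. Everything in it is an assumption made for illustration: the `TOOLS` registry, the `call_model` callable, the JSON shape of a tool call, and the retry policy are hypothetical, and none of it reflects DeepSeek's API or the interviewees' actual implementations.

```python
import json
from typing import Callable

# Hypothetical tool registry: tool name -> required argument names and types.
TOOLS = {
    "get_weather": {"city": str},
    "send_email": {"to": str, "body": str},
}


def validate_tool_call(raw: str) -> dict:
    """Parse a raw model reply and check it against TOOLS.

    Raises ValueError with a human-readable reason if the call is invalid.
    """
    try:
        call = json.loads(raw)
    except json.JSONDecodeError as exc:
        raise ValueError(f"not valid JSON: {exc}") from exc
    if not isinstance(call, dict):
        raise ValueError("tool call must be a JSON object")

    name = call.get("name")
    args = call.get("arguments")
    if not isinstance(name, str) or name not in TOOLS:
        raise ValueError(f"unknown tool: {name!r}")
    if not isinstance(args, dict):
        raise ValueError("arguments must be a JSON object")

    schema = TOOLS[name]
    for key, expected in schema.items():
        if key not in args:
            raise ValueError(f"missing argument: {key}")
        if not isinstance(args[key], expected):
            raise ValueError(f"argument {key} should be {expected.__name__}")
    extra = set(args) - set(schema)
    if extra:
        # Hallucinated arguments are rejected rather than silently dropped.
        raise ValueError(f"unexpected arguments: {sorted(extra)}")
    return call


def call_with_harness(
    call_model: Callable[[str], str], prompt: str, max_attempts: int = 3
) -> dict:
    """Request a tool call, retrying with the validation error as feedback."""
    feedback = ""
    for _ in range(max_attempts):
        raw = call_model(prompt + feedback)
        try:
            return validate_tool_call(raw)
        except ValueError as err:
            feedback = (
                f"\nYour previous tool call was rejected ({err}). "
                "Reply with corrected JSON only."
            )
    raise RuntimeError(f"no valid tool call after {max_attempts} attempts")
```

The design choice here is to fail closed: a call that does not match the schema never reaches the tool, and after a bounded number of retries the harness raises an error so the application can fall back to another model or a human.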

Core Strengths: Exceptional Code and Agent Capabilities, Cost-Efficiency, and Open-Source Commitment

DeepSeek V4-Pro scores highest among current open-source models on several key coding and software engineering benchmarks, performing nearly on par with top closed-source models. Its real strength lies in combining high capability with low cost, backed by a steadfast commitment to open source.

Huang Dongxu, Co-founder and CTO of PingCAP: He has migrated his daily workflow (email organization, article writing, calendar management, content summarization, and web browsing) from the more expensive Claude Opus and GPT models to DeepSeek V4-Pro. Huang noted that V4's Chinese optimization and overall language proficiency suit native Chinese speakers better, and that its capability is comparable to Claude Sonnet 4.5 to 4.6 at less than a quarter of the cost of leading models. This sharply reduces the cost of running agents and offers more protection against service disruptions from closed-source providers. On coding, V4 handles complex third-party API integrations well and shows a high one-shot success rate on tasks ranging from a few thousand to ten thousand lines of code. He believes that if a stronger model (at the GPT 5.5 level, for example) guides DeepSeek V4-Pro while V4 handles execution, overall Agent engineering costs could fall significantly.
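The cost argument behind this “strong model guides, V4 executes” split can be made concrete with a little arithmetic. The sketch below is illustrative only: the function name, the token counts, and both prices are made-up assumptions, chosen so that the executor costs a quarter of the planner per token. They are not published prices for any model.

```python
def blended_cost(
    planner_tokens: int,
    executor_tokens: int,
    planner_price: float,
    executor_price: float,
) -> float:
    """Total cost of a job split between a planner and an executor model.

    Prices are per million tokens, in an arbitrary currency unit.
    """
    return (
        planner_tokens * planner_price + executor_tokens * executor_price
    ) / 1_000_000


# Hypothetical prices per million tokens (assumptions, not real price lists).
PLANNER_PRICE = 20.0   # stronger, more expensive model
EXECUTOR_PRICE = 5.0   # cheaper model at a quarter of the planner's price

# A hypothetical 10M-token agent job: 1M tokens of planning, 9M of execution.
mixed = blended_cost(1_000_000, 9_000_000, PLANNER_PRICE, EXECUTOR_PRICE)

# The same job run entirely on the expensive model.
planner_only = blended_cost(10_000_000, 0, PLANNER_PRICE, EXECUTOR_PRICE)

savings_ratio = mixed / planner_only
```

Under these assumed numbers the mixed setup costs 65 units against 200 for the planner-only run, about a third of the cost. The real ratio depends on how many tokens the guiding model actually consumes and on how often the executor's work has to be redone.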

Zhao Binqiang, VP of Technology and Product Center at 01.AI: He regards DeepSeek V4 not as the “most versatile” but as the “most trustworthy” foundation model for B2B scenarios, owing to its firm open-source commitment, comprehensive technical reports, extremely low inference costs, and full-stack adaptation to domestic compute. Zhao highlighted two aspects. The first is the underlying architectural innovation: the mixed attention mechanism (CSA+HCA) maintains high-quality reasoning across a 1-million-token context window, and the team's exploration of context compression is disclosed openly and in detail. The second is the successful adaptation to Huawei Ascend 910B/950, with meticulous work on quantization, sparsity mechanisms, and domain-expert optimization. This marks a substantial step toward a full-stack domestic solution and toward reducing reliance on the Nvidia ecosystem.

Li Bojie, Chief Scientist at Pine AI: He was particularly impressed that DeepSeek V4 implemented a complex set of architectural innovations (MoE, CSA+HCA mixed attention, mHC, Muon, and FP4 QAT) at the 1.6-trillion-parameter scale, currently the largest for an open-source model.