This analysis compares two data science workflows separated by seven years of technological change. By tasking Claude Code with recreating a complex video game sales analysis originally performed in 2019, the author shows how much time modern agentic AI tools can save. It illustrates how developers can hand routine coding hurdles to an AI agent and focus on higher-level insights.

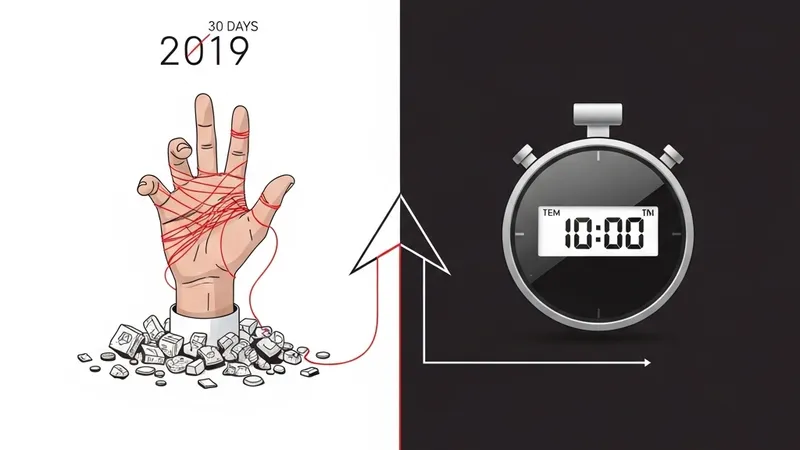

The experiment centers on a single data science project. In 2019, the author spent an entire month on manual trial and error to analyze video game market trends. In 2026, using Claude Code, the same scope of work, applied to a significantly more complex dataset, was finished in approximately 10 minutes. The author presents this drop from a month to minutes as a fundamental shift in how technical work gets done.

The technical demands of the modern version were notably higher. The 2024 dataset used in the new workflow was roughly four times larger than the 2019 version. It also featured richer attributes, including developer information and professional critic scores, which added complexity to the cleaning and correlation analysis phases. Despite this, the agent handled the larger data volume without a noticeable slowdown.

For the workflow itself, the author simply prompted Claude Code to analyze the new CSV file. The agent autonomously handled data ingestion, identified relevant patterns, and generated insights. This removes both the "blank page" problem and the "debugging loop" that often stall data scientists. Instead of writing boilerplate code for data visualization and statistical testing, the developer moves directly to interpreting results, with the AI acting as a co-pilot that manages the execution layer.
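For a sense of the boilerplate being delegated, the sketch below ingests a CSV, aggregates sales by group, and renders a plain-text bar chart. It is a hypothetical stand-in, not the agent's actual output: the `genre` and `total_sales` columns, the helper names, and the sample rows are all assumptions made for this example.

```python
import csv
import io
from collections import defaultdict

# Toy rows invented for illustration; the real dataset's schema may differ.
SAMPLE_CSV = """title,genre,total_sales
Game A,Action,12.5
Game B,Puzzle,3.2
Game C,Action,1.1
Game D,Sports,6.0
Game E,Puzzle,0.9
"""

def sales_by_group(csv_text, group_col, value_col="total_sales"):
    """Sum value_col per distinct group_col value, largest total first."""
    totals = defaultdict(float)
    for row in csv.DictReader(io.StringIO(csv_text)):
        totals[row[group_col]] += float(row[value_col])
    return dict(sorted(totals.items(), key=lambda kv: kv[1], reverse=True))

def text_bar_chart(totals, width=30):
    """Render one line per group, with bar length scaled to the largest total."""
    peak = max(totals.values())
    lines = []
    for name, total in totals.items():
        bar = "#" * max(1, round(width * total / peak))
        lines.append(f"{name:<8} {bar} {total:.1f}")
    return "\n".join(lines)

totals = sales_by_group(SAMPLE_CSV, "genre")
print(text_bar_chart(totals))
```

Even this small version involves parsing, type conversion, aggregation, sorting, and formatting, which is the kind of routine scaffolding the article describes handing off to the agent.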