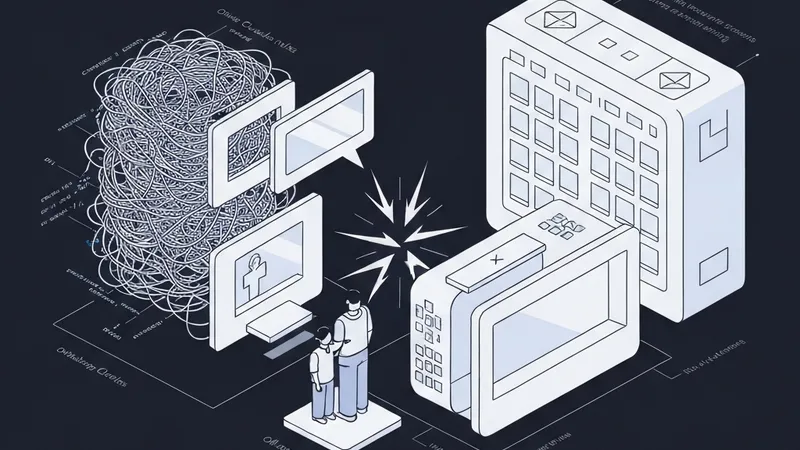

In a mature AI agent project, the .claude/rules/ directory often contains many instructions written for earlier model versions. Newer frontier models handle most of these behaviors correctly by default, yet the legacy rules persist. They consume context tokens with every message and can occasionally conflict with the model's improved defaults.

A significant challenge is the lack of auditing for these rules, largely because there's no agreed-upon definition of what "auditing a prompt" truly entails.

This post proposes a fix: every persistent rule should carry two mandatory tags:

- WHY: Specifies what default model behavior the rule corrects, along with the model version where this behavior was observed.

- Retire when: Defines the observable condition under which the rule is no longer necessary.

When a new frontier model is released, developers can conduct a brief audit against these rules' retirement conditions and archive those that no longer apply. This isn't a revolutionary concept; rather, it's a small, deliberate cost invested in each rule to ensure prompts decay cleanly instead of silently accumulating debt.
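As a rough illustration of what such an audit pass could look like, the sketch below turns a rules directory into a checklist for a new model. It is not taken from the framework mentioned in this post. It assumes one rule per `*.md` file and that each tag sits on its own line as `WHY: ...` or `Retire when: ...`; the names `TaggedRule`, `parse_rule`, and `audit_checklist` are hypothetical.

```python
from dataclasses import dataclass
from pathlib import Path
from typing import List, Optional


@dataclass
class TaggedRule:
    name: str
    why: str
    retire_when: str


def parse_rule(name: str, text: str) -> Optional[TaggedRule]:
    """Extract both tags from a rule's text; return None if either is missing."""
    why = retire_when = None
    for line in text.splitlines():
        # Tolerate leading whitespace and list markers such as "- " or "* ".
        stripped = line.strip().lstrip("-* ").strip()
        lower = stripped.lower()
        if lower.startswith("why:"):
            why = stripped[len("why:"):].strip()
        elif lower.startswith("retire when:"):
            retire_when = stripped[len("retire when:"):].strip()
    if why and retire_when:
        return TaggedRule(name, why, retire_when)
    return None


def audit_checklist(rules_dir: str, new_model: str) -> List[str]:
    """Build one checklist line per rule file (assumed layout: one rule per *.md file)."""
    lines = []
    for path in sorted(Path(rules_dir).glob("*.md")):
        rule = parse_rule(path.name, path.read_text(encoding="utf-8"))
        if rule is None:
            lines.append(f"[untagged] {path.name}: add WHY and Retire when before auditing")
        else:
            lines.append(f"[check on {new_model}] {rule.name}: retire when {rule.retire_when}")
    return lines
```

Calling `audit_checklist(".claude/rules", "<new model name>")` would return one line per rule file. The script only collects the retirement conditions; deciding whether each condition actually holds on the new model is still a manual observation.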

The author currently implements this pattern in the github.com/aman-bhandari/claude-code-agent-skills-framework project. Four rules there carry these tags so far, and the remaining rules are being tagged incrementally.

The Unnamed Failure Mode

Without this disciplined approach, the following scenario typically unfolds:

Imagine a project six months in. Your .claude/rules/ directory has grown organically; each time Claude Code exhibited an undesirable behavior, a rule was added to prevent it. Examples include: "always run tests before committing," "don't use the word 'seamless' in documentation," "prefer explicit type hints over inferred types," or "when asked a question, state what you're about to do before tool calls." This continues for rules 5 through 30, and beyond.

Then a new Claude model ships (say, Sonnet 4.7). It is better at Python: it already prefers explicit type hints, narrates its actions, and avoids marketing language without being told to. Yet your existing rule file remains, dutifully loaded with every message, directing the model to do what it would do anyway. The stale rules occasionally clash with the model's improved defaults, and they consume hundreds of context tokens on each request.

Such issues often go unnoticed. These rules, perhaps originating from the Sonnet 4.5 era, become "furniture": permanent fixtures. No one conducts an audit, and every new session inherits the accumulated debt. This post calls the phenomenon "scaffolding debt": rules designed for yesterday's model are now treated as critical infrastructure. It is the prompt-engineering equivalent of legacy code, where deletion is feared because the original rationale has been forgotten.

The Shape of the Fix

To ensure effective prompt governance, every rule you add should carry two mandatory tags:

- WHY: Specifies the default model behavior this rule corrects, along with the model version where the behavior was observed.

- Retire when: Defines the observable condition under which the rule is no longer needed.

Without both tags, a rule becomes unfalsifiable and undecayable. A rule that doesn't name the bad behavior it corrects cannot be tested for continued relevance or redundancy against a newer model version, and a rule with no retirement condition gives an auditor nothing observable to check.
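One way to enforce the two-tag requirement is a small lint check that reports which tags a rule is missing. The following is a minimal sketch, not the author's implementation: it assumes each tag appears at the start of a line as `WHY:` or `Retire when:` followed by non-empty text, and the function name `missing_tags` and the sample rule are illustrative only.

```python
import re
from typing import List

REQUIRED_TAGS = ("WHY", "Retire when")


def missing_tags(rule_text: str) -> List[str]:
    """Return the required tags that have no non-empty value in rule_text."""
    missing = []
    for tag in REQUIRED_TAGS:
        # The tag must start a line (an optional "- " or "* " marker is allowed)
        # and be followed by at least one non-space character on the same line.
        pattern = rf"^[ \t]*(?:[-*][ \t]*)?{re.escape(tag)}:[ \t]*\S"
        if not re.search(pattern, rule_text, flags=re.IGNORECASE | re.MULTILINE):
            missing.append(tag)
    return missing


# Illustrative rule text; the wording is an assumption, not copied from a real rule file.
example_rule = (
    "Prefer explicit type hints over inferred types.\n"
    "- WHY: the model omitted type hints by default (observed on Sonnet 4.5)\n"
    "- Retire when: a new model adds type hints without being asked\n"
)

print(missing_tags(example_rule))         # []
print(missing_tags("Always run tests."))  # ['WHY', 'Retire when']
```

A check like this could run before a rule is committed, so that an untagged rule is rejected at the moment its rationale is still fresh.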