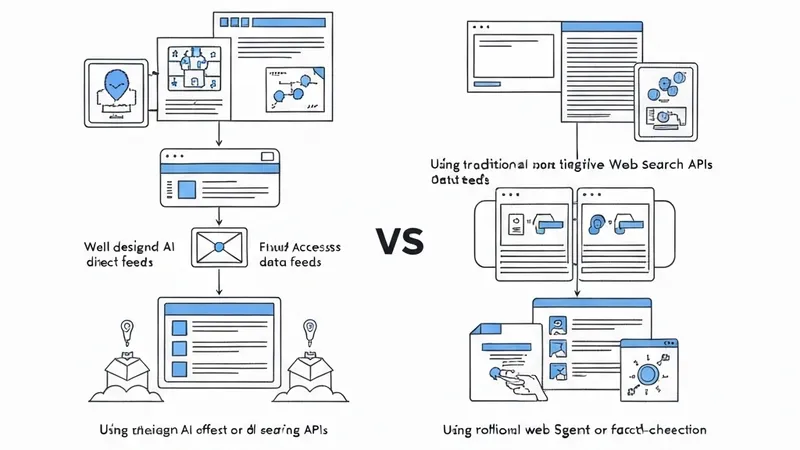

For AI agents built for research, fact-checking, or competitive intelligence, access to current and reliable web information is critical. However, most general-purpose search APIs are not designed for agent workflows. They typically return HTML-heavy pages and short snippets optimized for human browsing, rather than structured data that an agent can directly consume. Consequently, developers often need to build additional layers, such as custom crawlers, parsers, and ranking logic, to transform this content into a format an agent can use.

The Exa integration for the Strands Agents SDK addresses this gap by providing an AI-native search and retrieval layer directly within the tool interface. Exa delivers clean, structured content formatted for direct use in large language model (LLM) context windows, eliminating the need for post-processing to strip markup or reformat output. Combined with the Strands Agents SDK’s model-driven architecture, where the model decides when to invoke tools and how to use their outputs, agents can bring real-time web knowledge into their reasoning loops.

In practice, an agent accesses this integration through two core tools: `exa_search`, which performs semantic search with support for categories like news, research papers, and repositories; and `exa_get_contents`, which retrieves full content from selected URLs. Together, these tools let an agent first find relevant sources and then read them in full, so it can complete multi-step tasks by acquiring and processing web-based information as needed.

The Strands Agents SDK is an open-source framework from AWS for building AI agents using a model-driven approach. Instead of hard-coding workflows, developers provide a model, a system prompt, and a list of tools. The model autonomously decides the next action: which tools to call, in what order, and when the task is complete.

At its core is the agent loop. In each iteration, the model receives the full conversation history, including all prior tool calls and their results. If more information is needed, the model requests a tool; Strands Agents executes it and feeds the result back. This loop continues until the model produces a final answer. Because context accumulates across iterations, agents can tackle multi-step tasks that a single LLM call cannot handle.
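The agent loop described above can be illustrated with a minimal, library-free sketch. This is not the Strands Agents implementation: the `scripted_model` function is a hypothetical stand-in for an LLM, and `stub_search` and `stub_get_contents` are hypothetical tools that return canned strings rather than real web results. The point is only to show how tool results accumulate in the history until the model returns a final answer.

```python
from typing import Callable

# Hypothetical stand-ins for real tools; they return canned data, not web results.
def stub_search(query: str) -> str:
    return "https://example.com/report"

def stub_get_contents(url: str) -> str:
    return f"Full text of {url}"

TOOLS: dict[str, Callable[[str], str]] = {
    "stub_search": stub_search,
    "stub_get_contents": stub_get_contents,
}

def scripted_model(history: list[dict]) -> dict:
    """Toy 'model': chooses the next action from the tool results seen so far."""
    results = [m for m in history if m["role"] == "tool"]
    if len(results) == 0:
        # Nothing retrieved yet: search using the user's prompt.
        return {"type": "tool_call", "name": "stub_search", "input": history[0]["content"]}
    if len(results) == 1:
        # A URL was found: fetch its full contents.
        return {"type": "tool_call", "name": "stub_get_contents", "input": results[0]["content"]}
    # Enough context gathered: produce the final answer.
    return {"type": "final", "content": f"Answer based on: {results[-1]['content']}"}

def run_agent_loop(prompt: str, max_iterations: int = 10) -> tuple[str, list[dict]]:
    history = [{"role": "user", "content": prompt}]
    for _ in range(max_iterations):
        decision = scripted_model(history)  # the model sees the full history each time
        if decision["type"] == "final":
            return decision["content"], history
        # Execute the requested tool and feed the result back into the history.
        result = TOOLS[decision["name"]](decision["input"])
        history.append({"role": "assistant", "content": f"call {decision['name']}"})
        history.append({"role": "tool", "content": result})
    raise RuntimeError("no final answer within the iteration limit")

answer, history = run_agent_loop("latest findings on topic X")
```

In this sketch the loop runs three times: two tool calls followed by a final answer. The iteration cap is a design choice added here for safety; a real model decides for itself when the task is complete.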

The Strands Agents SDK ships with over 40 pre-built tools covering file I/O, shell execution, web search, AWS APIs, memory, and code execution, among others. It also supports the Model Context Protocol (MCP), making tools exposed by MCP servers available to an agent without additional integration effort. Adding new tools, including the Exa web search tools, follows a simple pattern: developers add them to the `tools=[]` list, and the model works out how to use them from each tool's name, description, and parameters.
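As a rough sketch of that pattern, the snippet below builds an agent with the two Exa tools. Several details are assumptions rather than confirmed facts from this post: the import path `strands_tools.exa`, the system prompt text, and the helper names `build_research_agent` and `run_example`, which are hypothetical. It also assumes the `strands-agents` and `strands-agents-tools` packages are installed and that credentials for both the model provider and Exa are configured in the environment.

```python
def build_research_agent():
    """Create an agent that can search the web and read pages via Exa."""
    # Imports live inside the function so this module loads even when
    # the Strands packages are not installed.
    from strands import Agent
    # Assumed import path for the Exa tools; check the tools package docs.
    from strands_tools.exa import exa_search, exa_get_contents

    return Agent(
        # Illustrative prompt only; not taken from the original post.
        system_prompt="You are a research assistant. Cite the URLs you used.",
        # The model decides when to call each tool and how to use the results.
        tools=[exa_search, exa_get_contents],
    )

def run_example(prompt: str) -> str:
    """Send one prompt to the agent and return its final answer as text."""
    agent = build_research_agent()
    result = agent(prompt)  # runs the agent loop until a final answer
    return str(result)
```

Calling `run_example("Summarize recent news about open-source agent frameworks")` would start the agent loop; which tools are invoked, and in what order, is left to the model.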