A recent evaluation of 17 open-source large language models (LLMs) on a set of elementary questions revealed significant failures, with some models confidently providing incorrect answers. This finding raises critical concerns about AI reliability, particularly given the identical confidence levels observed in both accurate and erroneous outputs.

The Setup

The test comprised six unambiguous questions, each with a single correct answer. These included:

- What is 7 times 8?

- A train goes 60 mph for 2.5 hours. How many miles?

- All cats are animals. All animals breathe. Do cats breathe?

- How many months have at least 28 days?

- What is 12 times 12?

- What is the square root of 9?
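
Because each question has exactly one correct answer, grading can be reduced to a lookup. The following is a minimal sketch of such an answer key and grader, not the harness used in the test: the short question labels and the `normalize` rule are assumptions introduced here for illustration.

```python
import re

# Expected answers for the six questions, in the order listed above.
# The keys are shorthand labels invented for this sketch.
ANSWER_KEY = {
    "7x8": "56",         # 7 * 8
    "train": "150",      # 60 mph * 2.5 hours
    "syllogism": "yes",  # cats are animals, animals breathe
    "months": "12",      # every month has at least 28 days
    "12x12": "144",      # 12 * 12
    "sqrt9": "3",        # square root of 9
}


def normalize(response: str) -> str:
    """Reduce a raw response to a comparable token (hypothetical rule)."""
    text = response.strip().lower()
    # Prefer the first number in the response, e.g. "150 miles" -> "150".
    match = re.search(r"-?\d+(?:\.\d+)?", text)
    if match:
        number = float(match.group())
        return str(int(number)) if number.is_integer() else str(number)
    # Otherwise fall back to the first yes/no word.
    match = re.search(r"\b(yes|no)\b", text)
    return match.group(1) if match else text


def is_correct(question_id: str, response: str) -> bool:
    """Return True when the normalized response matches the answer key."""
    return normalize(response) == ANSWER_KEY[question_id]
```

Under this rule, a verbose reply such as "Yes, all cats breathe" still counts as correct, while an empty response never matches any key. A stricter grader could require an exact match instead.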

All models were run locally via Ollama on a single workstation. The temperature parameter was set to 0 to make outputs as close to deterministic as possible, and the system prompt was "Answer with only the number." Each model was run three times on every question, for a maximum score of 18.
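
A setup like this can be approximated with Ollama's local HTTP chat endpoint. The sketch below is an assumption about how such a run might be scripted, since the original harness is not shown: it assumes Ollama is listening on its default local port, and the function names are hypothetical.

```python
import json
import urllib.request

# Ollama's default local address; adjust if the server runs elsewhere.
OLLAMA_CHAT_URL = "http://localhost:11434/api/chat"
SYSTEM_PROMPT = "Answer with only the number."
RUNS_PER_QUESTION = 3


def build_payload(model: str, question: str) -> dict:
    """Build the request body: fixed system prompt, temperature 0, no streaming."""
    return {
        "model": model,
        "messages": [
            {"role": "system", "content": SYSTEM_PROMPT},
            {"role": "user", "content": question},
        ],
        "stream": False,
        "options": {"temperature": 0},
    }


def ask(model: str, question: str, url: str = OLLAMA_CHAT_URL) -> str:
    """Send one question to a locally running Ollama server and return the reply text."""
    data = json.dumps(build_payload(model, question)).encode("utf-8")
    request = urllib.request.Request(
        url, data=data, headers={"Content-Type": "application/json"}
    )
    with urllib.request.urlopen(request) as response:
        body = json.load(response)
    return body["message"]["content"]


def run_question(model: str, question: str, ask_fn=ask) -> list:
    """Ask the same question several times so that run-to-run drift is visible."""
    return [ask_fn(model, question) for _ in range(RUNS_PER_QUESTION)]
```

Calling `run_question("phi4", "What is 7 times 8?")` would return three raw responses for later grading. Passing a stand-in `ask_fn` allows the loop to be exercised without a running server.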

Who Passed

Ten models achieved a perfect score of 18/18 across all three runs:

- gemma3:12b (Google, 12.2B parameters)

- phi4 (Microsoft, 14.7B parameters)

- llama3.1:8b (Meta, 8B parameters)

- gemma2:9b (Google, 9.2B parameters)

- aya:8b (Cohere, 8B parameters)

- yi:9b (01.AI, 9B parameters)

- ministral-3:8b (Mistral AI, 8B parameters)

- ministral-3:3b (Mistral AI, 3B parameters)

- command-r (Cohere, 35B parameters)

- llama3.2:3b (Meta, 3.2B parameters)

Who Failed (and How)

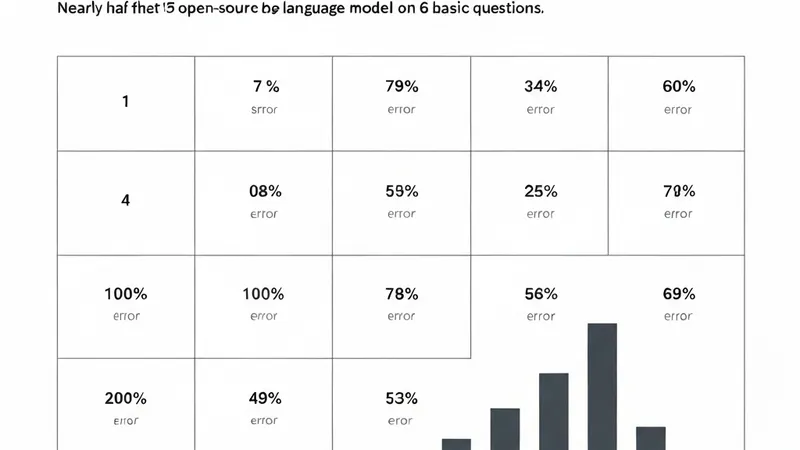

By contrast, six models failed at least one question, with two models scoring 0 out of 18:

- NVIDIA nemotron-mini (4.2B): Scored 15/18. This model deterministically failed the logic syllogism, incorrectly answering "No" to "Do cats breathe?" across all runs, despite correctly handling basic multiplication.

- Mistral 7B: Also scored 15/18. It consistently answered "7" to "How many months have at least 28 days?" (the correct answer is 12). Seven is the number of months with 31 days, so the model appears to have answered a different question than the one asked.

- Alibaba qwen3:4b and DeepSeek deepseek-r1:7b: Both models scored 0/18. These "reasoning" models, which utilize internal chain-of-thought, consumed their entire token budget without returning any answer, resulting in empty responses for all questions.

- AI21 jamba_reasoning: Scored 17/18. This model failed the logic syllogism in one of its three runs. Crucially, despite a temperature setting of 0 (intended for deterministic output), it produced inconsistent answers, indicating a problematic lack of stability under identical conditions.
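
The failures above fall into three distinct patterns: the same wrong answer on every run, no answer at all, and answers that change between runs. A minimal sketch of how three runs of one question could be sorted into those patterns follows. The category names and the simple string cleanup are assumptions made for illustration, not part of the original test.

```python
def classify_runs(responses: list, expected: str) -> str:
    """Label a set of repeated runs for one question (hypothetical categories)."""
    # Light cleanup so "No." and "no" compare equal.
    cleaned = [r.strip().lower().rstrip(".") for r in responses]
    if all(c == "" for c in cleaned):
        return "empty"  # e.g. token budget spent without a final answer
    if len(set(cleaned)) > 1:
        return "unstable"  # answers differ between runs
    return "correct" if cleaned[0] == expected else "consistently wrong"
```

For example, three "No" replies to the syllogism would be labeled "consistently wrong", three empty strings "empty", and a mix of "Yes" and "No" "unstable".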

The Core Reliability Challenge

The most significant concern highlighted by this test is that correct and incorrect responses are delivered with indistinguishable confidence. For instance, when asked "Do cats breathe?", phi4 responded "Yes, all cats breathe," while nemotron-mini stated "No." Both answers were delivered without any hedging or indication of uncertainty.

This means that users cannot judge the correctness of an answer from the model's output alone. The models do not signal uncertainty, nor do they appear to recognize when their answers are wrong. They present incorrect answers with the same conviction as correct ones, which poses a fundamental challenge to the trustworthy deployment of AI in critical applications.